Artificial intelligence and national security

By Gregory C. Allen, Taniel Chan | February 21, 2018

Editor’s note: This report was originally published by the Belfer Center for Science and International Affairs at Harvard University’s Kennedy School of Government.

Belfer Center Study

Artificial Intelligence and National Security

Gregory C. Allen and Taniel Chan

Harvard Kennedy School – Advisor Dr. Joseph Nye Jr.

Harvard Business School – Advisor Dr. Gautam Mukunda

IGA Seminar Leaders – Dr. Matthew Bunn & Dr. John Park

A study on behalf of

Dr. Jason Matheny – Director, IARPA

July 2017

Note: Statements and views expressed in this report are solely those of the authors and do not imply endorsement by Harvard University, the Harvard Kennedy School, Harvard Business School or the Belfer Center for Science and International Affairs. Opinions expressed in this document are solely those of the authors and are not a statement of the policy or views of the U.S. Government or IARPA. This effort is based entirely on unclassified research and conversations.

Project Overview

Partially autonomous and intelligent systems have been used in military technology since at least the Second World War, but advances in machine learning and Artificial Intelligence (AI) represent a turning point in the use of automation in warfare. Though the United States military and intelligence communities are planning for expanded use of AI across their portfolios, many of the most transformative applications of AI have not yet been addressed.

In this piece, we propose three goals for developing future policy on AI and national security: preserving U.S. technological leadership, supporting peaceful and commercial use, and mitigating catastrophic risk. By looking at four prior cases of transformative military technology – nuclear, aerospace, cyber, and biotech – we develop lessons learned and recommendations for national security policy toward AI.

Executive Summary

- Researchers in the field of Artificial Intelligence (AI) have demonstrated significant technical progress over the past five years, much faster than was previously anticipated.

- Most of this progress is due to advances in the AI sub-field of machine learning.

- Most experts believe this rapid progress will continue and even accelerate.

- Most AI research advances are occurring in the private sector and academia.

- Private sector funding for AI dwarfs that of the United States Government.

- Existing capabilities in AI have significant potential for national security.

- For example, existing machine learning technology could enable high degrees of automation in labor-intensive activities such as satellite imagery analysis and cyber defense.

- Future progress in AI has the potential to be a transformative national security technology, on a par with nuclear weapons, aircraft, computers, and biotech.

- Each of these technologies led to significant changes in the strategy, organization, priorities, and allocated resources of the U.S. national security community.

- We argue future progress in AI will be at least equally impactful.

- Advances in AI will affect national security by driving change in three areas: military superiority, information superiority, and economic superiority.

- For military superiority, progress in AI will both enable new capabilities and make existing capabilities affordable to a broader range of actors.

- For example, commercially available, AI-enabled technology (such as long-range drone package delivery) may give weak states and non-state actors access to a type of long-range precision strike capability.

- In the cyber domain, activities that currently require lots of high-skill labor, such as Advanced Persistent Threat operations, may in the future be largely automated and easily available on the black market.

- For information superiority, AI will dramatically enhance capabilities for the collection and analysis of data, and also the creation of data.

- In intelligence operations, this will mean that there are more sources than ever from which to discern the truth. However, it will also be much easier to lie persuasively.

- AI-enhanced forgery of audio and video media is rapidly improving in quality and decreasing in cost. In the future, AI-generated forgeries will challenge the basis of trust across many institutions.

- For economic superiority, we find that advances in AI could result in a new industrial revolution.

- Former U.S. Treasury Secretary Larry Summers has predicted that advances in AI and related technologies will lead to a dramatic decline in demand for labor such that the United States “may have a third of men between the ages of 25 and 54 not working by the end of this half century.”

- Like the first industrial revolution, this will reshape the relationship between capital and labor in economies around the world. Growing levels of labor automation might lead developed countries to experience a scenario similar to the “resource curse.”

- Also like the first industrial revolution, population size will become less important for national power. Small countries that develop a significant edge in AI technology will punch far above their weight.

- We analyzed four prior cases of transformative military technologies – nuclear, aerospace, cyber, and biotech – and generated “lessons learned” for AI.

- Lesson #1: Radical technological change begets radical government policy ideas.

- As with prior transformative military technologies, the national security implications of AI will be revolutionary, not merely different.

- Governments around the world will consider, and some will enact, extraordinary policy measures in response, perhaps as radical as those considered in the early decades of nuclear weapons.

- Lesson #2: Arms races are sometimes unavoidable, but they can be managed.

- In 1899, fears of aerial bombing led to an international treaty banning the use of weaponized aircraft, but voluntary restraint was quickly abandoned and did not stop air war in WWI.

- The applications of AI to warfare and espionage are likely to be as irresistible as aircraft. Preventing expanded military use of AI is likely impossible.

- Though outright bans of AI applications in the national security sector are unrealistic, the more modest goal of safe and effective technology management must be pursued.

- Lesson #3: Government must both promote and restrain commercial activity.

- Failure to recognize the inherent dual-use nature of technology can cost lives, as the example of the Rolls-Royce Nene jet engine shows.

- Having the largest and most advanced digital technology industry is an enormous advantage for the United States. However, the relationship between the government and some leading AI research institutions is fraught with tension.

- AI policymakers must effectively support the interests of both constituencies.

- Lesson #4: Government must formalize goals for technology safety and provide adequate resources.

- In each of the four cases, national security policymakers faced tradeoffs between safety and performance, but the government was more likely to respond appropriately to some risks than to others.

- Across all cases, safety outcomes improved when the government created formal organizations tasked with improving the safety of their respective technology domains and appropriated the needed resources.

- These resources include not only funding and materials, but talented human capital and the authority and access to win bureaucratic fights.

- The United States should consider standing up formal research and development organizations tasked with investigating and promoting AI safety across the entire government and commercial AI portfolio.

- Lesson #5: As technology changes, so does the United States’ National Interest.

- The declining cost and complexity of bioweapons led the United States to change its bioweapons strategy from aggressive development to voluntary restraint.

- More generally, the United States has a strategic interest in shaping the cost, complexity, and offense/defense balance profiles of national security technologies.

- As the case of stealth aircraft shows, targeted investments can sometimes allow the United States to affect the offense/defense balance in a domain and build a long-lasting technological edge.

- The United States should consider how it can shape the technological profile of military and intelligence applications of AI.

- Taking a “whole of government” frame, we provide three goals for U.S. national security policy toward AI technology and provide 11 recommendations.

- Preserve U.S. technological leadership

- Recommendation #1: The DOD should conduct AI-focused war-games to identify potential disruptive military innovations.

- Recommendation #2: The DOD should fund diverse, long-term-focused strategic analyses on AI technology and its implications.

- Recommendation #3: The DOD should prioritize AI R&D spending areas that can provide sustainable advantages and mitigate key risks.

- Recommendation #4: The U.S. defense and intelligence community should invest heavily in “counter-AI” capabilities for both offense and defense.

- Support peaceful use of the technology

- Recommendation #5: DARPA, IARPA, the Office of Naval Research, and the National Science Foundation should be given increased funding for AI-related basic research.

- Recommendation #6: The Department of Defense should release a Request for Information (RFI) on Dual-Use AI capabilities.

- Recommendation #7: In-Q-Tel should be given additional resources to promote collaboration between the national security community and the commercial AI industry.

- Manage catastrophic risks

- Recommendation #8: The National Security Council, the Department of Defense, and the State Department should study what AI applications the United States should seek to restrict with treaties.

- Recommendation #9: The Department of Defense and Intelligence Community should establish dedicated AI-safety organizations.

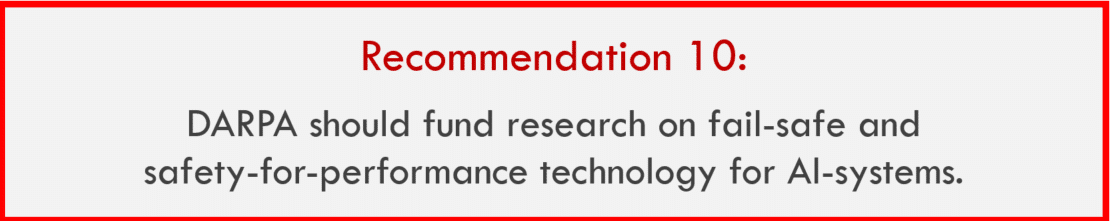

- Recommendation #10: DARPA should fund research on fail-safe and safety-for-performance technology for AI systems.

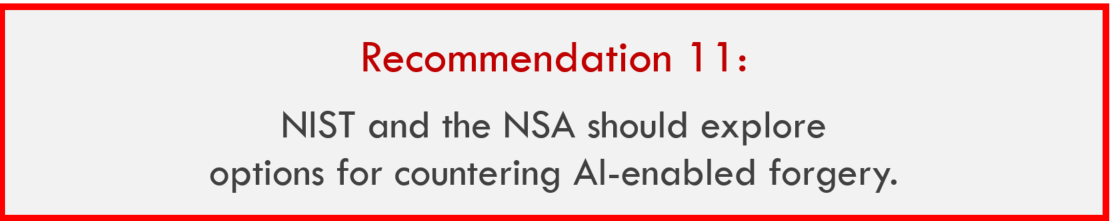

- Recommendation #11: NIST and the NSA should explore technological options for countering AI-enabled forgery.

Introduction & Project Approach

Over the past five years, researchers have achieved key milestones in Artificial Intelligence (AI) technology significantly earlier than prior expert projections.

Go is a board game with exponentially greater mathematical and strategic depth than chess. In 2014, the computer expert who had designed the world’s best Go-playing program estimated that it would be ten more years until a computer system beat a human Go champion.[1] Instead, researchers at DeepMind achieved that goal one year later.[2] Other researchers have since achieved new milestones in diverse AI applications. These include beating professional poker players,[3] reliable voice recognition,[4] image recognition superior to human performance,[5] and defeating a former U.S. Air Force Pilot in an air combat simulator.[6]

There are four key drivers behind the rapid progress in AI technology:

- Decades of exponential growth in computing performance

- Increased availability of large datasets upon which to train machine learning systems

- Advances in the implementation of machine learning techniques

- Significant and rapidly increasing commercial investment

Combined, these trends appear poised to continue delivering rapid progress for at least another decade.[A] Leading commercial technology companies report that they are “remaking themselves around AI.”

Most of the recent and near-future progress falls within the field of Narrow AI, and within machine learning specifically. General AI, meaning AI with the scale and fluidity of a human brain, is assumed by most researchers to be at least several decades away.[7]

There are strong reasons to believe – as many senior U.S. defense and intelligence leaders do – that rapid progress in AI is likely to impact national security.

Deputy Secretary of Defense Robert Work, a leader in developing and implementing the Department of Defense’s “Third Offset” strategy, supported this view in a speech at the Reagan Defense Forum: “To a person, every single person on the [Defense Science Board Summer Study] said, we can’t prove it, but we believe we are at an inflection point in Artificial Intelligence and autonomy.”[8] Such statements indicate national security leaders are confident that rapid progress in AI technology will continue and will have a significant impact on their mission.

The U.S. government has recently sponsored several significant studies on the future of AI and its implications for governance and national security.[B]

These studies are generally concerned with the near-term future of AI and are especially concerned with increased utilization of Deep Learning techniques.

Apart from the Office of Net Assessment’s Summer Study,[9] work to date generally does not focus on the long-term, more transformative implications of AI. This work is intended to assist in closing that gap.

Our Approach – Part 1: Analyzing possible technology development scenarios related to AI and exploring how these might transform national security

In this report, we supplement work to date with greater consideration across three dimensions:

- Greater diversity in potential applications of advances in AI

- Greater analysis of the implications of AI advances beyond what is currently possible or expected to be possible in the next five years

- Greater consideration of what technology management paradigms are best suited for AI and evaluating these in historical context

Our Approach – Part 2: Evaluating prior transformative military technologies in order to generate “lessons learned” for designing responses to the emergence of an important field of technology such as AI

We argue that AI technology is likely to be a transformative military technology, on a par with the invention of aircraft and nuclear weapons. Governments have long competed for leadership over rivals in driving and harnessing technological progress. Though machine learning and AI technology are comparatively young, human and organizational responses to the new technology are often echoes of prior experiences.[10] We believe learning from the past offers significant wisdom with which to guide a future course of action with respect to AI.

Accordingly, we investigate four prior cases of transformative technologies which we believe to be especially instructive and relevant for AI. These are listed in Figure 1.

Our Approach – Part 3: Providing AI-related policy recommendations to preserve U.S. technological leadership, support peaceful AI use, and mitigate catastrophic risk

For each case, we focus on the early decades of these technologies after they began to see military application. During this period, responsible agencies had to develop technology management strategies under significant uncertainty. We examine the nature of the technology, how the government sought to manage its evolution and utilization, and evaluate the results of those efforts through the lens of achieving the following three goals:

- Preserve U.S. technological leadership

- Support peaceful and commercial use of the technology

- Mitigate catastrophic risk

These goals are not always aligned and may conflict. Nevertheless, they capture what the national security community should seek. Finally, we provide policy recommendations[C] for how the United States national security community should respond to the opportunities and threats presented by AI, including achieving the three goals.

Part 1: The Transformative Potential of Artificial Intelligence

In a modified version of the framework laid out in the Office of Net Assessment AI Summer Study,[11] we analyze AI’s potentially transformative implications across three dimensions: military superiority, information superiority, and economic superiority.

In these we take note of existing technological capabilities and trends and then examine how further improvements in capability and/or decreases in cost might transform national security. We then lay out specific hypotheses for how these trends might interact to produce a transformative scenario.

As an overarching frame, consider this statement from the 2016 White House report on AI: “AI’s central economic effect in the short term will be the automation of tasks that could not be automated before.”[12] The same is true for military affairs. AI will make military and intelligence activities that currently require the efforts of many people achievable with fewer people or without people.

Implications for Military Superiority

In this section, we examine trends in AI that are likely to impact the future of military superiority. In particular, we analyze how future progress in AI technology will affect capabilities in robotics & autonomy and cybersecurity. After establishing key trends and themes, we conclude by laying out scenarios where these capability improvements would result in transformative implications for the future of military superiority.

Robotics & Autonomy

One of the prime uses of robots is to do things that are too dangerous for humans, and fighting wars is about as dangerous as it gets.

- Pedro Domingos, The Master Algorithm [13]

Autonomous systems have been used in warfare since at least WWII. Delegation of human control to such systems has increased alongside improvement in enabling technologies.

Very simple systems that use a sensor to trigger an automatic military action, such as land mines, have been in use for centuries. In recent decades, computers have taken on more responsibility in the use of force.[14] With the invention of the Norden Bombsight[D] and V-1 buzz bomb in World War II, computer systems were first linked to sensors involved in the dynamic control and application of lethal force.[15] So-called “fire-and-forget” missiles, for example, allow the onboard sensors and computer to guide a missile to its target without further operator communications following initial target selection and fire authorization.[16] The U.S. military has developed directives restricting development and use of systems with certain autonomous capabilities. Chief among these is that humans are always to be “in the loop” and directly make the decisions for all uses of lethal force.[17] [E]

The market size for both commercial and military robotics is increasing exponentially, and unit prices are falling significantly.

According to the Boston Consulting Group, between 2000 and 2015, the worldwide spending on military robotics (narrowly defined as only unmanned vehicles) tripled from $2.4 billion to $7.5 billion and is expected to more than double again to $16.5 billion by the year 2025.[18] Even this rapid growth may understate the true impact of increased adoption due to falling unit prices and the increasing overlap between commercial and military systems.
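The spending figures above can be restated as growth multiples and implied annual growth rates. The following is a minimal sketch, assuming smooth compound growth between the quoted endpoints (an assumption of this example, not a claim from the Boston Consulting Group data); the function names are illustrative.

```python
def growth_multiple(start, end):
    """Return how many times larger `end` is than `start`."""
    return end / start


def implied_annual_growth(start, end, years):
    """Return the constant annual growth rate that turns `start` into `end`.

    Assumes smooth compounding over `years`; real spending was not
    necessarily this regular.
    """
    return (end / start) ** (1.0 / years) - 1.0


# Figures quoted in the text, in billions of U.S. dollars.
SPEND_2000, SPEND_2015, SPEND_2025 = 2.4, 7.5, 16.5

if __name__ == "__main__":
    past = growth_multiple(SPEND_2000, SPEND_2015)
    future = growth_multiple(SPEND_2015, SPEND_2025)
    print(f"2000-2015 multiple: {past:.2f}x")
    print(f"2015-2025 multiple: {future:.2f}x")
    print(f"2000-2015 implied annual growth: "
          f"{implied_annual_growth(SPEND_2000, SPEND_2015, 15):.1%}")
    print(f"2015-2025 implied annual growth: "
          f"{implied_annual_growth(SPEND_2015, SPEND_2025, 10):.1%}")
```

Under that compounding assumption, both periods work out to roughly eight percent growth per year, which is consistent with "tripled" over fifteen years and "more than double" over the following ten.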

One type of robot, the Unmanned Aerial Vehicle,[F] otherwise known as a drone, has seen major commercial price declines over just the past few years.[19] Bill Gates has argued that robotics is poised for the same reinforcing cycle of rapid price declines and adoption growth that personal computers experienced.[20] As shown in Figure 2, in the 15 years from 1998 to 2013, the average price of a personal computer fell by 95%.[21] If a high-quality drone that costs $1,000 today were available for only $50 in the future, especially if that drone possessed improved autonomous capabilities, it would transform the cost curve for all sorts of military activity. As Paul Scharre has written, “Ultra-cheap 3D-printed mini-drones could allow the United States to field billions – yes, billions – of tiny, insect-like drones.”[22]

Expanded use of machine learning, combined with market growth and price declines, will greatly expand robotic systems’ impact on national security.

We argue that the use of robotic and autonomous systems in both warfare and the commercial sector is poised to increase dramatically. We concur with Gill Pratt, former DARPA Program Manager and leader of the DARPA Robotics Challenge, who argues that technological and economic trends are converging to deliver a “Cambrian Explosion” of new robotic systems.[23] The robotic “Cambrian Explosion” is an analogy to the history of life on Earth, specifically the period roughly 500 million years ago in which the pace of evolutionary change, for both diversity and complexity of life forms, increased significantly. Pratt points to several trends, but of particular importance are the improvements in the utilization of machine learning techniques and the ability for these techniques to allow robots to intelligently make decisions based on sensor data. Humans have been able to build self-driving automobiles for as long as they have been able to make automobiles, but they would invariably crash. Only recently has the technology been available to produce autonomous cars that can safely and reliably operate in the real world. The same is true for an incredibly diverse array of robotic systems.

Like the impact of cyber, increased utilization of robotics and autonomous systems will augment the power of both non-state actors and nation states.

The introduction of the cyber domain had benefits for all types of actors. Major states built powerful cyber weapons, conducted extensive cyber-espionage, and enhanced existing military operations with digital networking.

Since cyber capabilities were far cheaper than their non-cyber equivalents,[24] smaller states with less powerful militaries also made use of cyber. Ethiopia and many other governments, for example, used cyber tools to monitor political dissidents abroad.[25] Likewise, hostile non-state actors, including both criminals and terrorists, have made effective use of cyber tools for geographically dispersed activities that would be much more difficult to execute in the physical domain.[26] In the near term, the Cambrian Explosion of robotics and autonomy is likely to have similar impacts for power diffusion as the rise of national security operations in the cyber domain did.

In the short term, advances in AI will likely allow more autonomous robotic support to warfighters, and accelerate the shift from manned to unmanned combat missions.

Initially, technological progress will deliver the greatest advantages to large, well-funded, and technologically sophisticated militaries, just as Unmanned Aerial Vehicles (UAVs) and Unmanned Ground Vehicles (UGVs) did in U.S. military operations in Iraq and Afghanistan. As prices fall, states with budget-constrained and less technologically advanced militaries will adopt the technology, as will non-state actors. This pattern is observable today: ISIS is making noteworthy use of remotely-controlled aerial drones in its military operations.[27] In the future they or other terrorist groups will likely make increasing use of autonomous vehicles. Though advances in robotics and autonomy will increase the absolute power of all types of actors, the relative power balance may or may not shift away from leading nation states.

The size, weight, and power constraints that currently limit advanced autonomy will eventually be overcome, just as smartphones today deliver what used to be supercomputer performance.

Automobile manufacturers expect to be selling fully autonomous vehicles by the year 2021.[28] These cars will have large, expensive, and power-hungry computers onboard, but over time prices will fall, and sizes will shrink. A modern smartphone, which costs $700 and fits in a pocket, is more powerful than the world’s fastest supercomputer from the early 1990s.[29] The processors that will power upcoming autonomous vehicles are much, much closer to those of current phones than they are to current supercomputers (which require their own power plants).

Over the medium to long-term, robotic and autonomous systems are likely to match an increasing set of the technological capabilities that have been proven possible by nature.

We especially like this “Cambrian Explosion” biological analogy because biology is full of intelligent autonomous systems. An “existence proof” is when one acquires the knowledge that a specific technology is possible because one observes it in action. For instance, many militaries around the world first learned that precision-guided-missile (PGM) technology was possible when they saw the technology successfully used by the United States military during the Gulf War in 1991. Most militaries could not themselves build PGMs, but suddenly they knew that PGMs were technologically achievable.

Similarly, the natural world of biology can be considered a set of technological existence proofs for robotics and autonomy. Every type of animal, whether insect, fish, bird, or mammal, has a suite of sensors, tools for interacting with its environment, and a high-speed data processing and decision-making center. Humans do not yet know how to replicate all the technologies and capabilities of nature, but the fact that these capabilities exist in nature proves that they are indeed possible. Consider the common city pigeon: the pigeon has significantly more flight maneuverability, better sensors, faster data processing capability, and greater power efficiency than any comparable drone. The combination of a pigeon’s brain, eyes, and ears is also better at navigation and collision avoidance than any autonomous car, despite requiring less than one watt of power to function.[30] Humans do not know what the ultimate technological performance limit for autonomous robotics is, but the ultimate limit can be no lower than the very high level of performance that nature has proven possible with the pigeon, the goose, the mouse, the mosquito, the dolphin, etc.

Over the long term, these capabilities will transform military power and warfare.

Autonomous robots are unlikely to match all the technology and performance of nature in the next decade or two. Nevertheless, the robotic systems that are possible will be capable enough to transform military power. Human-developed technology can do things that nature’s engineering approach cannot, such as adapting capabilities from one system to another. A hypothetical robotic “bird” could also possess night vision or a needle for injecting venom. Even the most advanced robots are far from achieving this combination of capabilities and performance today, but given that these technologies exist in nature, there is no reason in principle why advanced military robots could not possess these capabilities. Robots can also make use of technologies that do not exist in nature, such as radar, explosives, ballistics, and digital telecommunications.

Cybersecurity & Cyberwar

Top U.S. national security officials believe that AI and machine learning will have transformative implications for cybersecurity and cyberwar.

In response to a question from the authors of this report, Admiral Mike Rogers, the Director of the National Security Agency and Commander of U.S. Cyber Command, said “Artificial Intelligence and machine learning – I would argue – is foundational to the future of cybersecurity […] We have got to work our way through how we’re going to deal with this. It is not the if, it’s only the when to me.”[31] We agree.

As with all automation, AI and machine learning will decrease the number of humans needed to perform specific tasks in the cyber domain.

The advent of cyber tools dramatically increased the productivity of individuals engaged in espionage. As Bruce Schneier of Harvard University points out, “the exceptionally paranoid East German government had 102,000 Stasi surveilling a population of 17 million: that’s one spy for every 166 citizens.”[32] By comparison, using digital surveillance, governments and corporations can surveil the digital activities of billions of individuals with only a few thousand staff. Increased adoption of AI in the cyber domain will further augment the power of those individuals operating and supervising these tools and systems.
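The productivity comparison above comes down to a simple ratio of people watched per person watching. The sketch below reproduces the Stasi figure quoted from Schneier and contrasts it with a purely hypothetical digital case; the two billion monitored users and 5,000 staff are illustrative placeholders for "billions" and "a few thousand", not figures from the report.

```python
def citizens_per_watcher(population, watchers):
    """Return how many people each watcher is responsible for surveilling."""
    if watchers <= 0:
        raise ValueError("watchers must be positive")
    return population / watchers


# Figures quoted in the text for East Germany.
STASI_AGENTS = 102_000
EAST_GERMAN_POPULATION = 17_000_000

# Hypothetical placeholders for the digital case (assumptions of this
# example, chosen only to match "billions" and "a few thousand").
HYPOTHETICAL_USERS = 2_000_000_000
HYPOTHETICAL_STAFF = 5_000

if __name__ == "__main__":
    stasi = citizens_per_watcher(EAST_GERMAN_POPULATION, STASI_AGENTS)
    digital = citizens_per_watcher(HYPOTHETICAL_USERS, HYPOTHETICAL_STAFF)
    print(f"Stasi: one agent per {stasi:.0f} citizens")
    print(f"Hypothetical digital case: one staff member per {digital:,.0f} people")
    print(f"Productivity ratio: about {digital / stasi:,.0f}x")
```

The exact digital ratio depends entirely on the placeholder values; the point is only that the gap spans several orders of magnitude.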

AI will be useful in bolstering cyber defense, since probing for weaknesses and monitoring systems can be enhanced with intelligent automation.

DARPA is currently working on systems that will bring AI into cyber defense. These include techniques for automatically detecting software code vulnerabilities prior to release and using machine learning to detect deviations from normal network activity.[33] Cyber defense is currently quite labor intensive and skilled cyber labor is in short supply. Additionally, AI will enable new paradigms for cyber defense. Most cyber defense systems today are based on a priori knowledge assumptions, in which the defender has optimized their system to address known threats, and is less well protected against unknown threats. AI and machine learning might allow systems to not only learn from past vulnerabilities, but also observe anomalous behavior to detect and respond to unknown threats.[34]
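To make the idea of "detecting deviations from normal network activity" concrete, here is a minimal sketch of one very simple approach: learn a statistical baseline from past observations and flag values that fall far outside it. This is a basic z-score check chosen for illustration, not the method used by DARPA or any specific product; the metric (requests per minute), the threshold of three standard deviations, and all names are assumptions of this example.

```python
import statistics


def fit_baseline(samples):
    """Summarize normal activity as (mean, sample standard deviation)."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate spread")
    return statistics.mean(samples), statistics.stdev(samples)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag `value` if it lies more than `threshold` deviations from the mean.

    The default threshold is an illustrative choice, not a recommendation.
    """
    mean, stdev = baseline
    if stdev == 0:
        # With no observed variation, any different value is a deviation.
        return value != mean
    return abs(value - mean) / stdev > threshold


if __name__ == "__main__":
    # Hypothetical requests-per-minute readings from a quiet period.
    normal_traffic = [100, 102, 98, 101, 99, 100, 103, 97]
    baseline = fit_baseline(normal_traffic)
    for reading in (101, 250):
        label = "ANOMALY" if is_anomalous(reading, baseline) else "normal"
        print(f"{reading} requests/min: {label}")
```

Because the detector only models what normal looks like, it needs no prior knowledge of a particular attack, which is the contrast with a priori, known-threat defenses drawn in the paragraph above. Real systems would model many features at once and would have to cope with an attacker who deliberately stays close to the baseline.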

However, the same logic suggests AI advances will enable improvements in cyber offense.

For cybersecurity, advances in AI pose an important challenge in that attack approaches today that are labor-and-talent constrained may – in a future with highly-capable AI – be merely capital-constrained. The most challenging type of cyberattack, for most organizations and individuals to deal with, is the Advanced Persistent Threat (APT). With an APT, the attacker is actively hunting for weaknesses in the defender’s security and patiently waiting for the defender to make a mistake. This is a labor-intensive activity and generally requires highly-skilled labor. With the growing capabilities in machine learning and AI, this “hunting for weaknesses” activity will be automated to a degree that is not currently possible and perhaps occur faster than human-controlled defenses could effectively operate. This would mean that future APTs will be capital-constrained rather than labor-and-talent constrained. In other words, any actor with the financial resources to buy an AI APT system could gain access to tremendous offensive cyber capability, even if that actor is very ignorant of internet security technology. Given that the cost of replicating software can be nearly zero, that may hardly present any constraint at all.

Near term, bringing AI technology applications into the cyber domain will benefit powerful nation-state actors. Over the long term, power balance outcomes are unclear, as is the long-term balance between cyber offense and defense.

To some extent there is already a market for the services of skilled cyber criminals. However, there are many people who refuse to serve as hitmen but are willing to sell guns. We should therefore be concerned about AI advances making cyber “guns” much more capable and autonomous. Developing cyber weapons includes the difficult steps of weaponizing undetected vulnerabilities, customizing software to have the desired effects, and engineering the weapons to avoid defenses. As AI-related cyber techniques improve, a greater and greater portion of the operations may be amenable to automation.[35] If true, the Stuxnet of the future may not require tens or hundreds of millions of dollars to develop and launch but merely hundreds or thousands of dollars as the steps requiring high-skill human cyber operator customization are reduced or eliminated through AI. At that point, most software can be reproduced at near-zero marginal cost.

Applications of AI therefore have exceptional abilities to strengthen the cyber capabilities of powerful nation-states, small states, and non-state actors. There is no obvious, stable outcome in terms of state vs. non-state power or offense vs. defense cyber advantage. It will depend on the balance of research and development investments by all actors, the pace of technological progress, and underlying limitations in economics and technology.

Potential Transformative Scenarios

The trends and themes described above could combine to create a military power landscape very different from what exists today. Below, we provide ten scenarios by which the growing capabilities of AI could transform military power. These are not meant as firm predictions. Rather, they are intended to be provocative and to demonstrate how wide the range of possible outcomes is – given current trends. Moreover, they are not mutually exclusive alternatives: several could happen simultaneously.

- Lethal autonomous weapons form the bulk of military forces.

For nearly eight decades, as automatic and autonomous systems have become more capable, militaries have become more willing to delegate authority to them.[36] Given that an AI-based pilot running on a $35 computer has already demonstrated the ability to beat a U.S. Air Force-trained fighter pilot in a combat simulator,[37] many actors will face increasing temptation to delegate greater levels of authority to a machine, or else face defeat. The Russian Military Industrial Committee has approved an aggressive plan that would have 30% of Russian combat power consist of entirely remote-controlled and autonomous robotic platforms by 2030.[38] [39] [G] Other countries facing demographic and security challenges are likely to set similar goals. For example, Japan and Israel, which have highly advanced technology sectors and unique demographic challenges, may find lethal autonomous weapons especially appealing. The United States Department of Defense has enacted restrictions on the use of autonomous and semi-autonomous systems wielding lethal force. Other countries and non-state actors may not exercise such self-restraint.

- Disruptive swarming technologies render some military platforms obsolete.

As of 2013, the United States possessed 14,776 military aircraft, some of which cost more than $100 million per unit.[40] A high-quality quadcopter UAV currently costs roughly $1,000, meaning that for the price of a single high-end aircraft, a military could acquire one million drones. If the robotics market sustains current price decline trends, in the future that figure might become closer to one billion. In such a scenario, drones would be even cheaper than some ballistic munitions are today, e.g. ~$150 per 155mm shell.

Commercial drones currently face significant range and payload limitations but become cheaper and more capable with each passing year. Imagine a low-cost drone with the range of a Canada Goose, a bird which can cover 1,500 miles in under 24 hours at an average speed of 60 miles per hour.[41] How would an aircraft carrier battlegroup respond to an attack from millions of aerial kamikaze explosive drones? Some of the major platforms and strategies upon which U.S. national security currently relies might be rendered obsolete.

- Robotic assassination is common and difficult to attribute.

The low cost of cyber has given offense the edge for targeted digital attacks. Widespread availability of low-cost, highly-capable, lethal, and autonomous robots could make targeted assassination more widespread and more difficult to attribute. A small, autonomous robot could infiltrate a target’s home, inject the target with a lethal dose of poison, and leave undetected. Alternatively, automatic sniping robots could assassinate targets from afar.

- Mobile-robotic-IEDs give low-cost, PGM-like capabilities to terrorists.

Improvised Explosive Devices (IEDs) posed a significant challenge to U.S. forces in Iraq because they were low-cost, easily manufactured, and could cause significant damage. As commercial robotic and autonomous vehicle technology becomes widespread, some groups will leverage this to make more advanced IED technology. For example, the technological capability to rapidly deliver explosives to a precise target from many miles away is currently restricted to powerful nation-states that sometimes spend millions of dollars for each Precision Guided Munition (PGM). If long distance package delivery by drone becomes a reality, the cost of precisely delivering explosives from afar would fall from millions of dollars to thousands or even hundreds. Similarly, self-driving cars could make suicide car bombs more frequent and devastating since they no longer require a suicidal driver.

- Military power grows disconnected from population size and economic strength.

The CIA World Factbook still counts the number of combat-age males in a country as one of the elements for determining a country’s military potential. In the future, however, even countries with small, elderly, and declining populations may be able to use robotics and autonomy to possess robotic “manpower” far beyond their human population size. Consider South Korea: after Google DeepMind’s AlphaGo system defeated the South Korean Go Champion Lee Sedol, South Korea’s government announced that it would spend nearly $1 billion over the next five years on AI research and development.[42] Including government in-kind contributions and reprogrammed funds, South Korea’s annual AI R&D spending may reach $1 billion within the next year or two.[43]

If South Korea does reach such a figure, it would match the 2015 AI R&D budget of the United States, a country with a nearly fifteen-fold larger economy. Though such a scenario is speculative, it is possible that a technologically advanced country with a smaller population, such as South Korea, could build a significant advantage in AI-based military systems and thereby field greater numbers of more capable robotic “warfighters” than some more populous adversaries.

- Cyberweapons are frequently used to kill.

The linkage of digital and physical systems will expand the number of possibilities for killing with cyberweapons. A self-driving car could be hacked and made to crash on the highway.[44] While lethal cyberattacks are possible without AI, AI will change the situation in two ways: First, AI capabilities might make it possible or even easy to execute such attacks at scale, and possible for well-funded actors with limited cyber expertise to perpetrate them. Second, the growth of AI applications will help bring more hackable things into the physical world.

- Most actors in cyber space will have no choice but to enable relatively high levels of autonomy, or else risk being outcompeted by “machine-speed” adversaries.

There are some sectors of military power where high levels of autonomy are a prerequisite for success. Missile defense, for instance, cannot always wait for human operators to individually target and approve the launching of each counter-missile. Similarly, AI cyber defense will have to be given high levels of autonomy to respond to high speed cyberattacks or else risk being overwhelmed. In recent years, some attackers of government networks have attempted to maintain their presence even after discovery, actively fighting with the United States for control.[45] Machine-speed AI defenders or attackers would likely have an advantage in this sort of virtual “hand to hand combat”[46] since they operate at gigahertz speed. As with missile defense, those defenders unwilling to turn over control to AI will simply lose out to attackers who are more willing to do so.[47]

- Unexpected interactions of autonomous systems cause occasional “flash crashes.”

Autonomous systems can make decisions incredibly rapidly, much faster than humans can monitor and restrain them without the aid of machines. Because of autonomous systems’ high speed, unexpected interactions and errors can spiral out of control rapidly. One ominous example is the stock market “Flash Crash” of May 2010, which the U.S. Securities and Exchange Commission reported was enabled and exacerbated by use of autonomous financial trading systems.[48] In the Flash Crash, one trillion dollars of stock market value was wiped out within minutes because of unintended machine interactions (emergent effects). One must consider the cybersecurity or autonomous vehicle equivalent of a flash crash.

The system verification and validation process for autonomous systems that leverage machine learning is still in its relative infancy, and the flash crash suggests that even systems which perform better than humans for 99%+ of their operations may occasionally have catastrophic, unexpected failures. This is especially worrisome given the adversarial nature of warfare and espionage. Pedestrians and other drivers want autonomous vehicles to be successful and safe. The military adversaries of robotic systems, like those in financial markets, will be less kind.

- Involving machine learning in military systems will create new types of vulnerabilities and new types of cyberattacks that target the training data of machine learning systems.

Since machine learning systems rely upon high-quality datasets to train their algorithms, injecting so-called “poisoned” data into those training sets could lead AI systems to perform in undesired ways. For instance, researchers have shown that an adversary with access to a deep neural network image classifier’s training data could expose it to data that the classifier would systematically miscategorize.[49] One could imagine a more extreme data poisoning attack that would lead a sensor to falsely recognize friend as foe or foe as not present at all. Such manipulations are possible with existing cyber systems, but as we increase use of machine learning, the nature of the attack will change. Given rising levels of autonomy, the impact of an attack might also increase significantly.

- Theft and replication of military and intelligence AI systems will result in AI cyberweapons falling into the wrong hands.

In aerospace or other technologies, stealing the blueprints for a weapon does not actually give the thief access to the weapon or even a guaranteed ability to develop one. As one of us wrote in a previous article[50] for Vox:

When China stole the blueprints and R&D data for America’s F-35 fighter aircraft, for example, it likely shaved years off the development timeline for a Chinese F-35 competitor. But, China didn’t actually acquire a modern jet fighter or the immediate capability to make one. That’s because aerospace manufacturing is incredibly difficult, and China can’t yet match US competence in this area.[51] But when a country steals the code for a cyberweapon, it has stolen not only the blueprints, but also the tool itself — and it can reproduce that tool at near-zero marginal cost.

In the cyber domain, groups have reportedly stolen access to U.S. government cyber tools and used them to infect hundreds of thousands of computers for criminal purposes.[52] Cyber tools utilizing AI may also share this property, and the result – especially if offense-dominance remains the case – would be that highly-destructive AI cyberweapons could be widely available and difficult to control.

Hacking of robotic systems might also pose a serious risk. Paul Scharre has pointed out that autonomous weapons “pose a novel risk of mass fratricide, with large numbers of weapons turning on friendly forces […] This could be because of hacking, enemy behavioral manipulation, unexpected interactions with the environment, or simple malfunctions or software errors.”[53]
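The training-data poisoning scenario described earlier in this list can be made concrete with a toy example. The sketch below is not the method used in the research cited above, which concerned deep neural network image classifiers. It assumes a deliberately simple nearest-centroid classifier over a single hypothetical sensor reading, and all data values and function names are illustrative. An attacker who can add a handful of foe-like readings labeled "friend" to the training set shifts the classifier so that real foes are waved through.

```python
def centroid(values):
    """Mean of a list of numbers."""
    return sum(values) / len(values)


def train(samples):
    """Fit a nearest-centroid model from (reading, label) pairs."""
    friend = [x for x, label in samples if label == "friend"]
    foe = [x for x, label in samples if label == "foe"]
    return centroid(friend), centroid(foe)


def predict(model, reading):
    """Assign the label whose centroid is closest to the reading."""
    friend_center, foe_center = model
    if abs(reading - friend_center) <= abs(reading - foe_center):
        return "friend"
    return "foe"


def accuracy(model, samples):
    """Fraction of samples the model labels correctly."""
    correct = sum(predict(model, x) == label for x, label in samples)
    return correct / len(samples)


# Hypothetical clean training data: low readings are friends, high are foes.
clean_training = [(1.0, "friend"), (2.0, "friend"), (3.0, "friend"),
                  (7.0, "foe"), (8.0, "foe"), (9.0, "foe")]

# Held-out readings used to evaluate both models.
evaluation = [(1.5, "friend"), (2.5, "friend"), (4.0, "friend"),
              (6.0, "foe"), (7.5, "foe"), (8.5, "foe")]

# Assumed attack: six foe-like readings injected with the label "friend".
poison = [(10.0, "friend")] * 6

clean_model = train(clean_training)
poisoned_model = train(clean_training + poison)

clean_accuracy = accuracy(clean_model, evaluation)
poisoned_accuracy = accuracy(poisoned_model, evaluation)

print("clean accuracy:   ", clean_accuracy)
print("poisoned accuracy:", poisoned_accuracy)
print("reading 7.5 is now classified as:", predict(poisoned_model, 7.5))
```

The attacker never touches the classifier's code. Corrupting a small share of the training data is enough to move the decision boundary, which is why control over training data matters as much as control over software.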

Implications for Information Superiority

If World War III will be over in seconds, as one side takes control of the other’s systems, we’d better have the smarter, faster, more resilient network.

- Pedro Domingos, The Master Algorithm [54]

In this section, we examine trends in Artificial Intelligence that are likely to impact the future of information superiority. In particular, we analyze how future progress in AI technology will affect capabilities of intelligence collection and analysis of data, and the creation of data and media. We believe the latter set of capabilities will have significant impacts on the future of propaganda, strategic deception, and social engineering. After establishing the key trends and themes, we conclude by laying out scenarios where these capability improvements would result in transformative implications for the future of information superiority.

Collection & Analysis of Data

U.S. Intelligence agencies are awash in far more potentially useful raw intelligence data than they can analyze.

According to a study by EMC Corporation, the amount of data stored on Earth doubles every two years, meaning that as much data will be created over the next 24 months as over the entire prior history of humanity.[55] Most of this new data is unstructured sensor or text data and stored across unintegrated databases. For intelligence agencies, this creates both an opportunity and a challenge: there is more data to analyze and draw useful conclusions from, but finding the needle in so much hay is tougher. Each day, the intelligence agencies of the United States collect more raw intelligence data than their entire workforce could effectively analyze in their combined lifetimes.[56]
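The step from "doubles every two years" to "as much data in the next 24 months as in all prior history" follows directly from the arithmetic of doubling. The short sketch below illustrates it under two simplifying assumptions that are ours, not the EMC study's: that growth is a steady exponential, and that stored data is a reasonable proxy for data created. The function name and the ten-year reference point are hypothetical.

```python
def stored_data(years_from_start, doubling_period_years=2.0, initial_amount=1.0):
    """Total data stored after a given time, assuming steady doubling."""
    return initial_amount * 2 ** (years_from_start / doubling_period_years)


# Hypothetical reference point: ten years into the doubling trend.
stored_today = stored_data(10)
stored_in_two_years = stored_data(12)

# Data added during the next doubling period.
added_next_two_years = stored_in_two_years - stored_today

# Under steady doubling, the newly added data equals everything stored before.
print(added_next_two_years == stored_today)
```

The same relationship holds at any starting point on the curve, which is why the backlog facing analysts grows as fast as the total itself.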

Computer-assisted intelligence analysis, leveraging machine learning, will soon deliver remarkable capabilities, such as photographing and analyzing the entire Earth’s surface every day.

Analysts must prioritize and triage which collected information to analyze, and they leverage computer search and databases to increase the amount of information that they can manage. Some datasets that were previously only analyzable by human staff, such as photos, are newly amenable to automated analysis based on machine learning. In 2015, image recognition systems developed by Microsoft and Google outperformed human competitors at the ImageNet challenge.[57] These machine learning-based techniques are already being adapted by U.S. intelligence agencies to automatically analyze satellite reconnaissance photographs,[58] which may make it possible for the United States to image and automatically analyze every square meter of the Earth’s surface every single day.[59] Since machine learning is useful in processing most types of unstructured sensor data, applications will likely extend to most types of sensor-based intelligence, such as Signals Intelligence (SIGINT) and Electronic Intelligence (ELINT). Machine learning-based analysis is also useful for analyzing and deriving meaning from unstructured text.

Creation of Data and Media

AI applications can be used not only to analyze data, but also to produce it, including automatically-generated photographs, video, and text.

Researchers have demonstrated rapid progress in the ability of AI to generate content. Existing AI-related capabilities include but are not limited to the following:

- Realistically changing the facial expressions and speech-related mouth movements of an individual on video in real-time, using only a retail-consumer webcam[H] [60]

- Generating a realistic-sounding, synthetic voice recording of any individual for whom there is sufficient training data, so-called “Photoshop for Audio” [I] [61]

- Producing realistic, fake images based only on a text description [62]

- Producing written news articles based on structured data such as political polls, election results, financial reports and sports game statistics [63]

- Creating a 3D representation of an object (such as a face) based on one or more 2D images [J] [64]

- Automatically producing realistic sound effects to accompany a silent video [K] [65]

In the near future, it will be possible even for amateurs to generate photo-realistic HD video, audio, and document forgeries – at scale.

Today, many of these AI-forgery capabilities are real enough that they can sometimes fool the untrained eye and ear. In the near future, they will be good enough to fool at least some types of forensic analysis. Moreover, these tools will be available not only to advanced computer scientists, but to anyone, unless the government effectively restricts their availability. [L] When tools for producing fake-video at higher quality than today’s Hollywood Computer-Generated Imagery (CGI) are available to untrained amateurs, these forgeries might comprise a large part of the information ecosystem.

The existence of widespread AI forgery capabilities will erode social trust, as previously reliable evidence becomes highly uncertain.

Since the invention of the photographic camera in the mid-1800s, the technology for capturing highly reliable evidence has been significantly cheaper and more available than the technology for producing convincing forgeries. Today, every individual with a smartphone can record HD video of events to which they bear witness. Moreover, most people today can generally (though not always) tell when a video they are looking at is fake. Currently, producing high-quality fake video is extremely expensive. Hollywood movies spend tens of millions of dollars to produce believable CGI, and still many fans occasionally complain that the images look fake.[66] This will change. As one of us wrote in an article for WIRED,[67]

Today, when people see a video of a politician taking a bribe, a soldier perpetrating a war crime, or a celebrity starring in a sex tape, viewers can safely assume that the depicted events have actually occurred, provided, of course, that the video is of a certain quality and not obviously edited.

But that world of truth—where seeing is believing—is about to be upended by artificial intelligence technologies […]

When tools for producing fake video perform at higher quality than today’s CGI and are simultaneously available to untrained amateurs, these forgeries might comprise a large part of the information ecosystem. The growth in this technology will transform the meaning of evidence and truth in domains across journalism, government communications, testimony in criminal justice, and, of course, national security.

We will struggle to know what to trust. Using cryptography and secure communication channels, it may still be possible to, in some circumstances, prove the authenticity of evidence. But, the “seeing is believing” aspect of evidence that dominates today – one where the human eye or ear is almost always good enough – will be compromised.

Potential Transformative Scenarios

As the above analysis indicates, AI is useful both for using data to arrive at conclusions and for generating data to induce false conclusions. In other words, AI can assist intelligence agencies in determining the truth, but it also makes it easier for adversaries to lie convincingly. Which of these two features predominates is likely to shift back and forth with specific technological advances. Below, we outline six possible scenarios for how AI capabilities could transform the future of information superiority. We acknowledge that some of these are mutually exclusive. Our aim is to show how wide the range of possible transformative outcomes is, not to flawlessly forecast the future.

1. Supercharged surveillance brings about the end of guerrilla warfare.

There is a plausible “winner-take-all” aspect to the future of AI and surveillance, especially for nation-states. Terrorist and guerrilla organizations will struggle to plan and execute operations without leaving dots that nation-states can collect and connect. Imagine, for instance, if the United States could have placed low-cost digital cameras with facial recognition and the robotic equivalent of a bomb-sniffing dog’s nose[68] every 200 yards on every road in Iraq during the height of U.S. operations. If robotics and data processing continue their current exponential price declines and capability growth, this sort of AI-enhanced threat detection system might be possible. If it did exist, guerrilla warfare and insurgency as we know it today might be impossible.

2. A country with a significant advantage in AI-based intelligence analysis achieves decisive strategic advantage in decision-making and shaping.

Over the longer term, AI offers the potential to effectively fuse and integrate the analysis of many different types of sensor data sources into a more unified source of decision support. The Office of Net Assessment Summer Study astutely compared the potential of AI intelligence support to the advantage that the United Kingdom and its allies possessed during World War II once they had decrypted the Axis Enigma and Purple codes. [69]

3. Propaganda for authoritarian and illiberal regimes increasingly becomes indistinguishable from the truth.

Given the ease of producing forgeries using AI, regimes that control official media will be able to produce high quality forgeries to shape public perceptions to a degree even greater than today. Supposedly “leaked” videos could be produced of hostile foreign leaders shouting offensive phrases or ordering atrocities. Though forged media will also be produced against authoritarian regimes, state control of media and social media censorship might limit its ability to be disseminated.

4. Democratic and free press difficulty with fake news gets dramatically worse.

The primary problem with fake news today is that it fools individual citizens and voters. In the future, even high-quality journalistic institutions and governments will face persistent difficulty in separating fake news from reality. Because of a flood of high-quality forgeries, even the best news organizations will sometimes report hoaxes as real and fail to report real news because they are tricked into believing that it is fake.

5. Command and Control organizations face persistent social engineering threats.

Widely available AI-generated forgeries will pose a challenge for Command and Control organizations. Those giving and receiving orders will struggle to know which communications (written, video, audio) are authentic. Social engineering hacks,[M] which are analogous to digital hacking but target people instead of computers, might be a much greater problem in the future. Allowing an individual in a video or audio phone call to assume the likeness and voice of someone they are impersonating adds another significant layer of difficulty to validating communications. One can imagine an adversary impersonating a military or intelligence officer and ordering the sharing of sensitive information or taking some action that would expose forces to vulnerability. AI could be used to produce counterfeit versions of DOD Directives and statements of policy and to disseminate them widely across the internet. Adversaries of a military could use these technologies to produce large quantities of forged evidence purporting to show that the military has engaged in war crimes.

6. Combined with cyberattacks and social media bot networks, AI-enabled forged media threatens the stability of an economy or government regime.

On April 23, 2013, hackers took control of the Associated Press’ official Twitter account and tweeted “BREAKING: Two Explosions in the White House and Barack Obama is injured” to the account’s nearly two million followers.[70] In the two minutes following the tweet, the U.S. stock market lost nearly $136 billion in value until the hack was revealed.[71] With AI-enabled forgery, one could imagine a future, more devastating hack: Hackers would take control of an official news organization website or social media account and use it to spread not only false text, but also false video and audio. A network of social media bots could then be used to spread the fake messaging rapidly and influence a broad number of individuals. Exactly this sort of social media botnet influencing approach was reportedly used by Russia in its attempt to influence the outcome of the 2016 U.S. presidential election.[72]

To some extent this problem is not new. For instance, some of the images circulated widely on social media that claimed to depict Israel’s airstrikes on Gaza in 2014 were photographs of the more extensive violence from conflicts in Syria and Iraq.[73] However, if forged evidence were sufficiently compelling and effectively disseminated, it might result in stock market crashes, riots, or worse. One way this might be executed by an adversary would be to acquire thousands of real (and sensitive) documents through cyber-espionage and then leak the real documents alongside a few well executed forgeries which could then be supported by “leaked” forged audio and video. Even if the government offered widespread denials and produced contradicting evidence, it would still struggle to quash the false understanding in a population that such an operation could bring about. The government would also face major difficulty in limiting and remediating the potentially significant consequences of that false understanding.

Implications for Economic Superiority

In the same way that a bank without databases can’t compete with a bank that has them, a company without machine learning can’t keep up with one that uses it […] It’s about as fair as spears against machine guns. Machine learning is a cool new technology, but that’s not why businesses embrace it. They embrace it because they have no choice.

- Pedro Domingos, The Master Algorithm [74]

In this section, we examine trends in Artificial Intelligence that are likely to impact the future of economic superiority. In particular, we analyze how future progress in AI technology will affect the speed of technological innovation, and how increases in automation will affect employment. After establishing key trends and themes, we conclude by laying out scenarios where these capability improvements would result in transformative implications for the future of economic superiority.

Innovation Supercharger

Artificial Intelligence might be a uniquely transformative economic technology, since it has the potential to dramatically accelerate the pace of innovation and productivity growth.

Many advancements in the domain of AI have the character of general purpose technologies, meaning that they enhance productivity across a broad swath of different industries. AI applications can do more, however. They can accelerate the pace of invention and innovation itself. Consider three examples:

- Automation of scientific experiments: researchers developed a robotic system that can autonomously develop scientific genomic hypotheses, conduct scientific biology experiments to test the hypotheses, and then reach conclusions about the hypotheses that inform the next generation of hypothesis formation.[75]

- Synthesizing findings in thousands of scientific papers: A partnership between the Barrow Neurological Institute and IBM resulted in an AI system that used language processing algorithms to analyze thousands of peer-reviewed research articles related to a neurodegenerative disease and then correctly predicted five previously unknown genes related to the disease.[76]

- Automatically generating and optimizing engineering designs: machine learning algorithms supported by advanced mechanical simulation have proven useful in developing new designs for mechanical equipment, including car engines.[77]

These examples show that developing a leading technological position in conducting AI research will likely deliver benefits to the pace of research and development progress in many fields, including AI. AI applications can therefore act as an “innovation supercharger.”

Automation and Unemployment

The 2016 White House Report on Artificial Intelligence, Automation, and the Economy found that increasing automation will threaten millions of jobs[78] and that future labor disruptions might be more permanent than previous cases.

Automation has always led to the destruction of jobs. After the invention of the mechanized tractor, for example, agricultural labor in the United States began a permanent decline. Farming work today is performed by only 1% of the American population (3.2 million). In 1920, farming labor comprised 30% of the population (32 million).[79]

What is different today, according to the White House report, is the speed of the economic disruption. Economic theory suggests that the increased productivity through automation should ultimately also decrease prices and provide consumers more disposable income with which to generate demand for other goods, services and the workers that provide them.[80] This price effect can be slow, however, especially in comparison to the pace of job loss and the length of time required to retrain displaced workers.

It may be the case, however, that large populations of workers lose their jobs due to automation and thereafter face a dearth of new job opportunities. Former U.S. Treasury Secretary Larry Summers has lent credence to this view: “This question of technology leading to a reduction in demand for labor is not some hypothetical prospect … It’s one of the defining trends that has shaped the economy and society for the last 40 years,” he said in a June 2017 interview. More worryingly, however, Summers went on to posit the following dire scenario:

“I suspect that if current trends continue, we may have a third of men between the ages of 25 and 54 not working by the end of this half century, because this is a trend that shows no sign of decelerating. And that’s before we have … seen a single driver replaced [by self-driving vehicles] …, not a trucker, not a taxicab driver, not a delivery person. … And yet that is surely something that is en route.”[81]

Notably, the one-third unemployment rate that Summers predicts is higher than either the United States or Germany faced at the height of the Great Depression.[82] If Summers’ scenario comes to pass, the political stability and national security consequences could be dire.

One worst case scenario, which is not included in the White House report but is taken seriously by some economists and computer scientists, is that the next wave of automation will leave many workers around the world in the same position that horses faced during the mechanized agriculture and transportation revolutions[83] – unable to remain economically competitive with machines at any price and unable to acquire new, economically useful skills. Human farm laborers successfully retrained to work in other industries when the need for farm labor declined. Horses could not. In 1900, there were 21 million horses and mules in the United States, mostly for animal labor. By 1960, there were fewer than 3 million.[84] If artificial intelligence significantly and permanently reduces demand for human unskilled labor, and if significant portions of the unskilled labor workforce struggle to retrain for economically valuable skills, the economic and social impacts would be devastating.

If AI does lead to permanent worker displacement, technologically advanced countries may face the “Resource Curse” problem, whereby the owners of productive capital are highly concentrated, and economics and politics become unstable.

The Resource Curse problem refers to a diverse and robust set of economic analyses that show countries where natural resources comprise a large portion of the economy tend to be less developed and more unstable than countries with more diversified economies. For instance, one extensive study of the topic found that “between 1960 and 1990, the per capita incomes of resource-poor countries grew two to three times faster than those of resource-abundant countries.”[85] The main mechanisms for the Resource Curse (as it applies to natural resource wealth) are summarized below:

- The composition of extractive industries promotes inequality and poor governance: Extractive industries, such as mining, are capital-intensive and labor-light relative to their scale in the economy. These characteristics imply that a small number of people reap outsized benefits of resource exports.

- Redistribution of resource revenues risks government corruption: By taxing extractive industries, the government raises significant revenues which it can then use to provide public goods such as infrastructure and services. Though potentially beneficial, this allocative model of wealth encourages corruption and weak institutions since those with power will be tempted to allocate capital based on political imperatives rather than in accordance with long term economic goals.

- Inequality promotes political and civil conflict: The outsized concentration of national wealth in relatively few areas encourages conflict over who will control those resources rather than collaboration over how to promote sustainable economic growth overall. For example, Sierra Leone’s decades of war prior to 2000 were fueled by conflict over which faction would control the country’s diamond mines.

- Success in the natural resource export sectors harms other industries: Increased demand for a country’s natural resource exports causes pressure on its currency to appreciate. The more valuable domestic currency in turn makes other export sectors – such as manufacturing and agriculture – more expensive and less competitive. Domestic-focused producers are also harmed as the stronger domestic currency makes imports cheaper.
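The currency mechanism in the last item above can be illustrated with a few lines of arithmetic. This is a minimal sketch, not part of the original analysis: the prices, the 20% appreciation, and the function names `export_price_abroad` and `import_price_at_home` are all hypothetical values chosen for illustration.

```python
# Illustrative arithmetic only: all figures below are hypothetical assumptions.

def export_price_abroad(domestic_price: float, fx_rate: float) -> float:
    """Price a foreign buyer pays, where fx_rate is foreign-currency
    units per one unit of domestic currency."""
    return domestic_price * fx_rate


def import_price_at_home(foreign_price: float, fx_rate: float) -> float:
    """Price a domestic buyer pays for a good priced in foreign currency."""
    return foreign_price / fx_rate


rate_before = 1.00  # assumed exchange rate before the resource boom
rate_after = 1.20   # assumed rate after a 20% currency appreciation

# A manufactured export that costs 100 units of domestic currency to supply.
export_before = export_price_abroad(100.0, rate_before)
export_after = export_price_abroad(100.0, rate_after)

# A competing import priced at 100 units of foreign currency.
import_before = import_price_at_home(100.0, rate_before)
import_after = import_price_at_home(100.0, rate_after)

print(f"Export price abroad: {export_before:.1f} -> {export_after:.1f}")
print(f"Import price at home: {import_before:.1f} -> {import_after:.1f}")
```

Under these assumed numbers, the export becomes about 20% more expensive for foreign buyers while the competing import becomes about 17% cheaper at home, which is the squeeze on both exporters and domestic-focused producers that the item describes.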

Though there are interesting parallels between the resource curse and how automation might enable consolidation of control over the economy, there are also important differences. Most notably, production and consumption of the natural resources typically associated with the resource curse (e.g. oil) are relatively inelastic, meaning that a large change in the price of a good might result in only a modest change in production or consumption. Further study is needed on this issue.
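For reference, the standard textbook definition of price elasticity can be written as follows. The symbols $E$, $Q$ (quantity produced or consumed), and $P$ (price) are introduced here for illustration and do not appear in the original analysis.

```latex
E = \frac{\%\,\Delta Q}{\%\,\Delta P} = \frac{\Delta Q / Q}{\Delta P / P},
\qquad \text{inelastic when } |E| < 1
```

As a purely hypothetical example, with $|E| = 0.1$ a 10% change in price would correspond to only about a 1% change in quantity.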

Potential Transformative Scenarios

- Automation-induced “Resource Curse” plagues technologically developed economies.

Though speculative, some have argued that Resource Curse mechanisms would operate in a country where the owners of automation capital (in both manufacturing and service sectors) were concentrated among elites, and labor was comparatively weak in its bargaining power. To illustrate, consider the trajectory of the first industrial revolution. At the beginning, the productivity of both labor and capital increased significantly, but worker wages remained low, and most of the returns went to the owners of capital. Only by organizing into groups that had economic power (the ability to go on strike and halt production) and political power (the ability to influence the state’s regulation and enforcement behavior) were workers able to secure a greater share of the economic returns of industrialization. In resource curse economies, only a small number of well-compensated workers are required to sustain the main economic drivers, and the non-resource industry workers generally lack economic bargaining power. The owners of capital therefore need only be limited by political concerns, which lead them to redistribute the minimum amount of resource wealth required to establish sustainable political or military governing constituencies. If automation could perform a significant portion of current jobs at higher quality and lower cost, and if the displaced labor population lacked skills and the ability to retrain for any newly created job demand, a similar operative mechanism to the resource curse theory is plausible for heavily automated economies.

If true, advanced economies, including the United States and many of its allies, will face significant future challenges in maintaining good governance and political stability. Increasing instability among OECD countries could result in a wave of illiberalism and corruption among democracies. In the worst case, such a scenario might threaten the US-led system of democratic alliances and U.S. national security.

- A country with a significant lead in AI-enabled innovation technology develops a self-reinforcing technological and economic edge.

AI’s role as innovation-supercharger can deliver a strategic (and perhaps permanent) economic and military advantage to a country that develops a significant lead in exploiting AI applications. Because of this recursive-improvement property, and because AI applications also facilitate the automation of labor, it is possible to imagine a breakaway economic and innovation growth scenario, whereby a country develops a significant lead in developing certain AI applications, which then guarantee it will be the first to discover the next generation of innovations, and so on. In the most extreme scenario, one could imagine a small, technologically advanced country like Singapore developing an accelerating technological edge that facilitates extreme economic growth, far beyond what would normally be expected of a country with only five million people. This may sound implausible, but consider the fact that in 1900, Great Britain, a country of only 40 million people, came to control an empire with dominion over nearly 25% of the Earth’s land and population. Being the first to exploit a technological revolution can have outsized consequences. Likewise, this AI-enabled recursive-improvement scenario might result in one country acquiring radically superior military technology, especially in the domain of cyberweapons, where experiments and simulations can be run at digital speeds.

- AI-enabled economic sabotage emerges as a new type of weapon.

As described in the Information Superiority section, the 2013 AP Twitter account hack had a major, though extremely brief, impact on the U.S. stock market. A more extreme version of this capability could be harnessed into a generalized economic weapon, intended to crash stock or other trading markets, or to disrupt the major digitally-connected means of production in an economy.[86] To some extent, this threat exists today due to cyberattacks, but AI capabilities might allow much smaller teams of non-nation-state actors to launch such an attack and might also increase the scale of such an attack. In 2001, Enron, a corrupt energy company, deliberately shut down a power plant in California on false pretenses to raise energy prices and generate billions in excess profits. The crisis resulted in waves of blackouts across California.[87] An economic terrorist or nation-state adversary using AI-enhanced cyberweapons might replicate this sort of attack for either strategic military advantage or even just to make a profit by making calibrated investments ahead of time.

Part 2: Learning from Prior Transformative Technology Cases

Having summarized the mechanisms by which Artificial Intelligence might prove to be a transformative field for military technology, we now summarize our analysis of prior transformative military technologies – Nuclear, Aerospace, Cyber, and Biotech – and then draw lessons that apply to the management of AI technology. Our full analysis of these prior cases is included in the Appendix; Part 2 presents only a summary of that analysis and the lessons we propose.

Key Technology Management Aspects

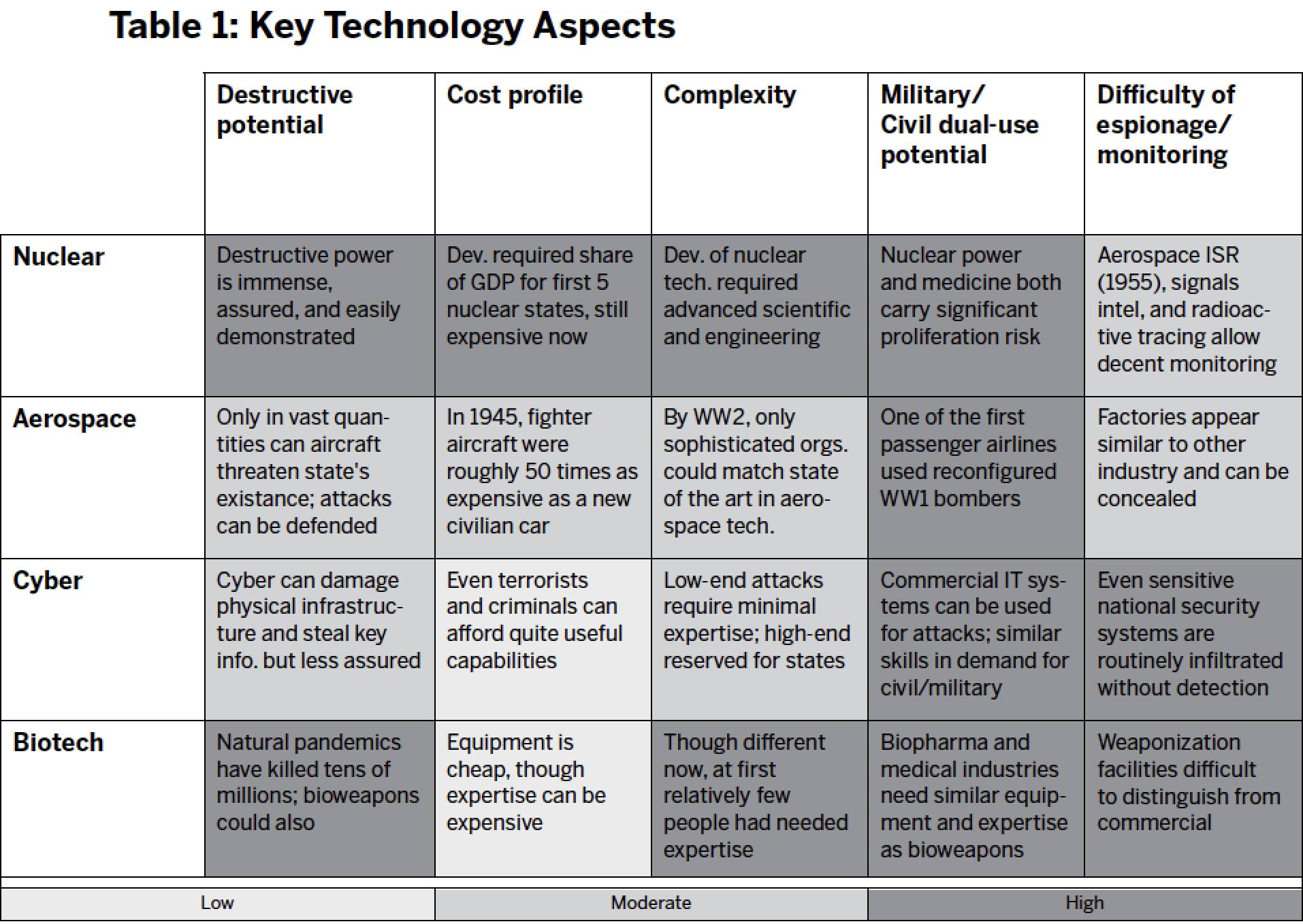

Though each of these technology cases was transformational for U.S. national security, they had different underlying scientific and economic conditions, which affected the optimal approach for the U.S. government to manage them. We evaluated each case across five different dimensions:

- Destructive potential: Using the technology, how much destruction can weapons cause? How easy is it to demonstrate the destructive potential? How assured is the destruction?

- Cost profile: What resources, and at what price, are required to develop the technology? What is the marginal cost of weapons production at scale? Does production require large fixed assets?

- Complexity Profile: What types of technical expertise are required to develop the technology? To use it after acquisition? Is this expertise primarily dependent on formal knowledge (e.g. mathematics) or tacit knowledge (e.g. manufacturing excellence)?

- Military/Civil dual-use potential: Does experience with commercial versions of the technology imply easy transitions to the military version? Do companies that produce in one sphere tend to also produce for the other? Do workers with skills from the commercial sector have relevant skills for the military sector?

- Difficulty of espionage and monitoring: Is it easy for adversaries to monitor the progress of a military development program? Is it easy for developers to hide their development, or portray it as commercially intended? Is the technology easily replicated or reverse-engineered?

Again, detailed justification for our technology management aspects is provided in the Appendix. Our summary of the technology profile for each case is presented in Table 1:

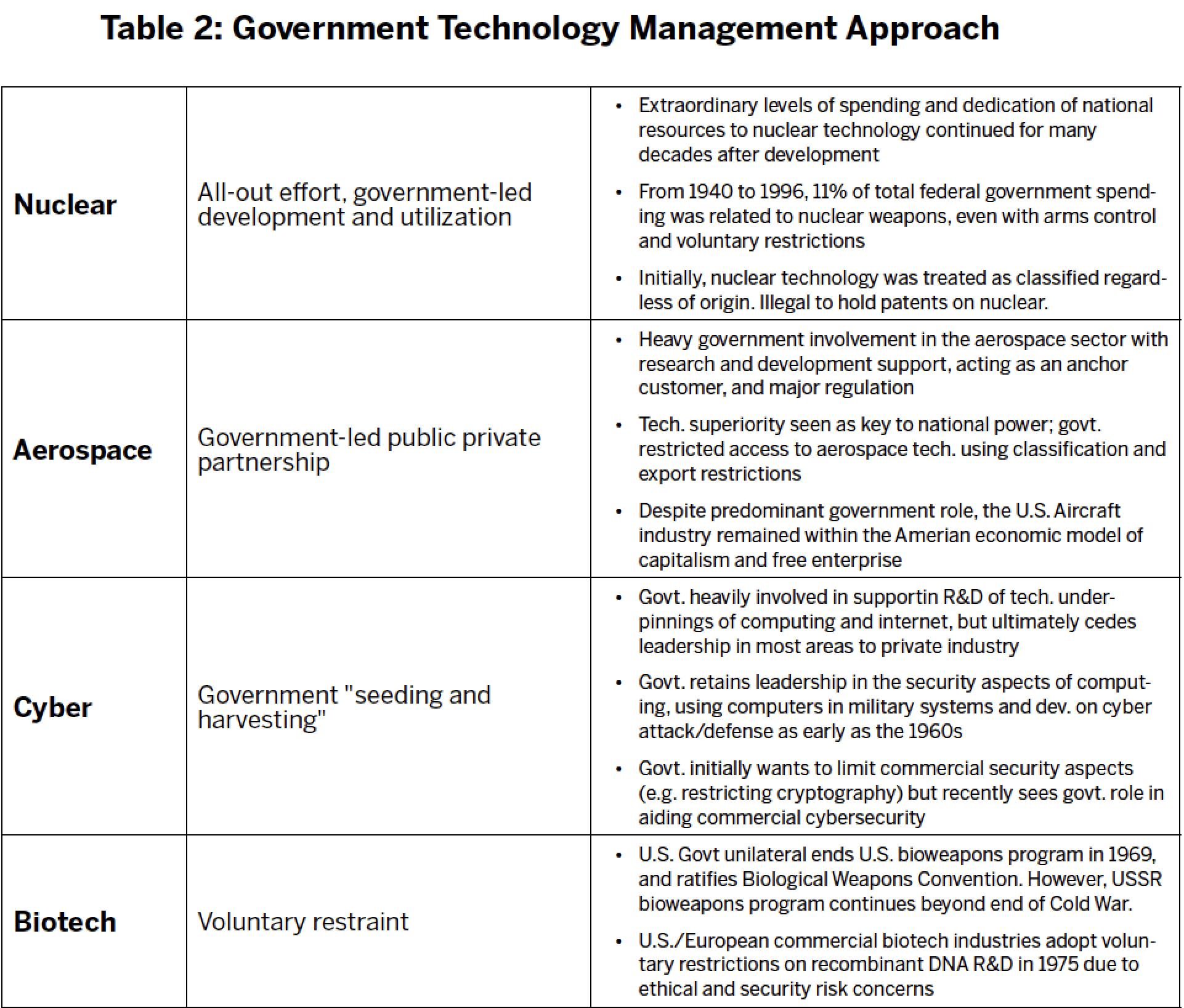

Government Technology Management Approach

In what is admittedly (and necessarily) a partial oversimplification, we have classified the U.S. government’s management paradigm for each of the four technologies. Our goal here is to clarify how the government viewed the nature of the challenge – especially in its early decades – and characterize what approach it ultimately took to meet it. A more detailed justification of our analysis is provided in the Appendix. The four approaches are summarized in Table 2:

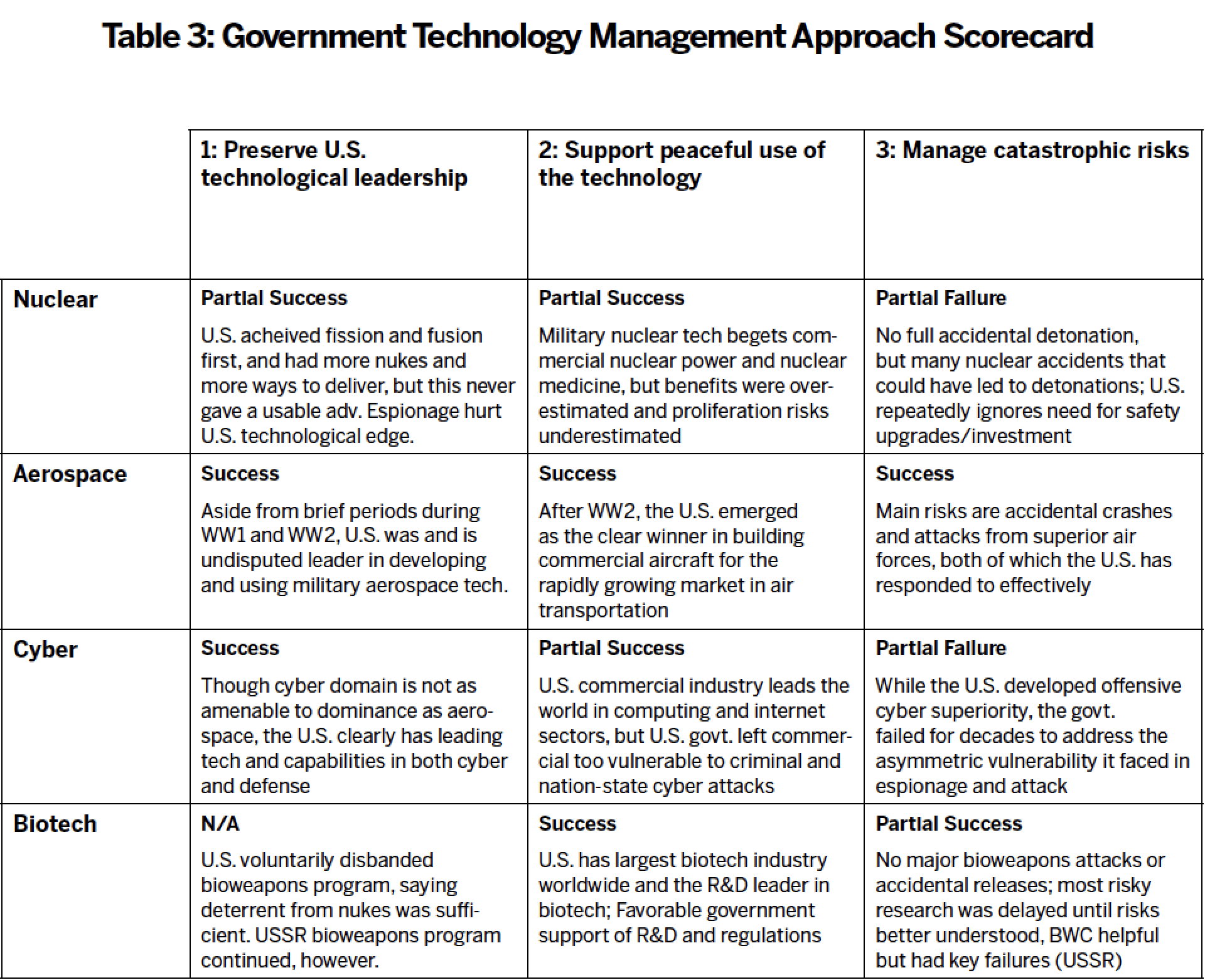

Government Management Approach “Scorecard”

Next, we evaluate the effectiveness of the government’s technology management approach for each of the four cases. Our evaluation is based upon our assessment of the government’s performance in meeting three key goals:

Our detailed justifications for the scorecard are provided in the Appendix. Our findings are summarized in Table 3.

AI Technology Profile: A Worst-case Scenario?

Comparing the technology profile of AI with the prior technology cases, we find that it has the potential to be a worst-case scenario. Proper precautions might alter this profile in the future, but current trends suggest a uniquely difficult challenge.

Destructive Potential: High

- At a minimum, AI will dramatically augment autonomous weapons and espionage capabilities and will represent a key aspect of future military power.

- Speculative but plausible hypotheses suggest that General AI and especially superintelligence systems pose a potentially existential threat to humanity.[88] [N]

Cost Profile: Diverse, but potentially low

- Developing cutting-edge capabilities in machine learning and AI can be expensive: many firms are spending hundreds of millions or even billions of dollars on R&D.

- However, relatively small teams can leverage open-source code libraries and COTS or rented hardware to develop powerful capabilities for less than $1 million; leaked copies of AI software might be virtually free.

Complexity Profile: Diverse, but potentially low

- Advancing the state of the art in AI basic research requires world-class talent, of which there is a very limited pool.

- However, applying existing AI research to specific problems can sometimes be relatively straightforward and accomplished with less elite talent.

- Technical expertise required for converting commercially available AI capabilities into military systems is currently high, but this may decline in the future as AI improves.

Military/Civil Dual-Use Potential: High

- Militaries and commercial businesses are competing for essentially the exact same talent pool and using highly similar hardware infrastructure.

- Some military applications (e.g. autonomous weapons) require additional access to non-AI related expertise to deliver capability.

Difficulty of Espionage and Monitoring: High

- Overlap between commercial and military technology makes it difficult to distinguish which AI activities are potentially hostile.

- Few if any physical markers of AI development exist.

- Total number of actors developing and fielding advanced AI systems will be significantly higher than for nuclear or even aerospace technology.

- Monitors will find it difficult to assess AI aspects of any weapons without direct access.

Lessons Learned

Having provided our observations of previous cases, we will now attempt to summarize lessons learned. We recognize that there are vast differences of time, technology, and context between these cases and AI. This is our effort to characterize some lessons which endure nevertheless.

Lesson #1: Radical technology change begets radical government policy ideas

The transformative implications of nuclear weapons technology, combined with the Cold War context, led the U.S. government to consider some extraordinary policy measures, including but not limited to the following:

- Enacted – Giving one individual sole authority to start nuclear war: The United States President, as head of government and commander in chief of the military, was invested with supreme authority regarding nuclear weapons[89]

- Considered – Internationalizing control of nuclear weapons under the exclusive authority of the United Nations in a collective security arrangement [O] [90]

- Enacted – Voluntarily sharing atomic weapons technology with allies (which occurred) and adversaries including the Soviet Union (which did not)[91]

- Considered – Atomic annihilation: Pre-emptive and/or retaliatory atomic annihilation of adversaries, which could have resulted in millions or even billions of deaths[P]

- Enacted – Voluntarily restricting development in arms control frameworks to ban certain classes of nuclear weapons and certain classes of nuclear tests

The world has lived with some of these policies for seven decades, so the true extent of their radicalism (at the time they were first considered) is hard to convey. The first example is perhaps the easiest, because it required passage of the Presidential Succession Act of 1947, which laid the foundation for the 25th Amendment to the United States Constitution. Though there were other proximate causes for the 25th Amendment, such as the assassination of President Kennedy, it is only a mild stretch to say that the invention of nuclear weapons was so significant that it led to a change in the United States Constitution.