Is your face gay? Conservative? Criminal? AI researchers are asking the wrong questions

By Trenton W. Ford | May 20, 2022

An illustration of facial recognition. Credit: Gerd Altmann/Pixabay.


Artificial intelligence (AI) offers the promise of a world made more convenient by algorithms designed to mimic human cognitive abilities. In a story we tell ourselves about progress, AI systems will perform tasks that improve our lives and handle more of our work, so we can spend our time on more enjoyable or productive pursuits. The true story of AI, however, is being told through the questions researchers are using it to answer. And unfortunately, some are not pursuing ideas likely to advance a utopian vision of the future. Instead, they’re asking questions that risk re-creating flawed human thinking of the past.

Troublingly, some modern AI applications are delving into physiognomy, a set of pseudoscientific ideas that first appeared thousands of years ago with the ancient Greeks. In antiquity, Pythagoras, the Greek mathematician, decided which students to accept based on whether they "looked" gifted. To the philosopher Aristotle, bulbous noses denoted an insensitive person and round faces signaled courage. Some ancient Greeks, at least, thought that if someone's facial features resembled a lion's, that person would have lion-like traits such as courage, strength, or dignity.

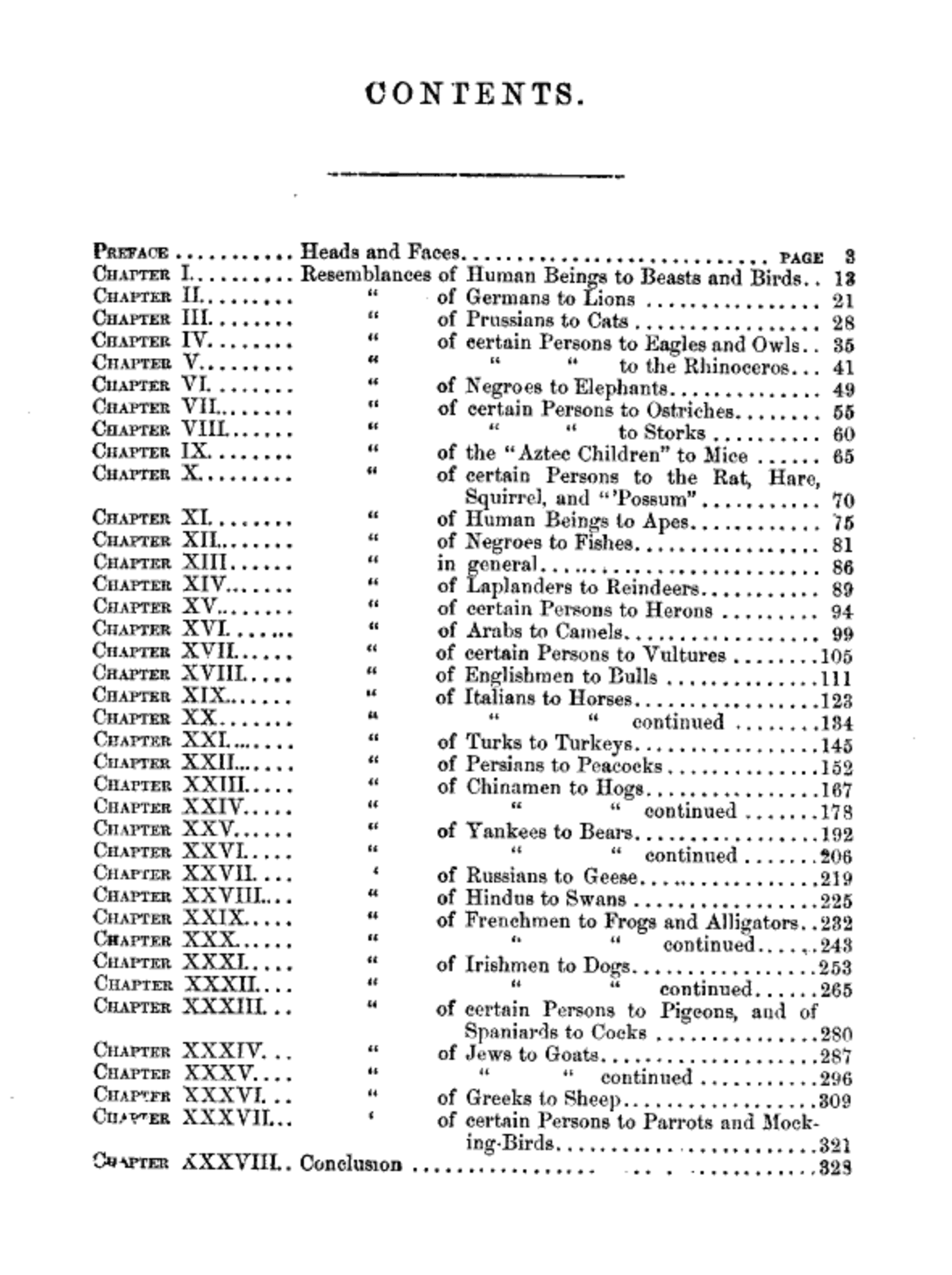

During its period of peak popularity in the 19th century, physiognomy became more sinister and racist than its quirky manifestation in ancient Greece. In his 1852 book, Comparative Physiognomy: Or Resemblances Between Men and Animals, author James W. Redfield, a doctor, made the case for physiognomy's usefulness by relating, in each chapter, an animal to a region or race of people. In one chapter, he compared the character of Middle Easterners to camels; in another, he compared Chinese people to pigs. Redfield and other authors of the era used supposed science to reinforce their biases, and, for a time, their claims were accepted as science.

There are such things as bad questions. These days, some AI researchers are asking troubling questions of the technology, attempting to use it to link facial attributes to fundamental character. They are not saying "yippee, look what AI can do" (in fact, some say they are trying to highlight risks), but by publishing in scientific journals, they risk lending credibility to the very idea of using AI in problematic, physiognomic ways. Recent research has tried to show that political leaning, sexuality, and criminality can be inferred from pictures of people's faces.

Political orientation. In a 2021 article in Nature Scientific Reports, Stanford researcher Michal Kosinski found that, using open-source code and publicly available data and facial images, facial recognition technology could judge a person's political orientation correctly 68 percent of the time, even when controlling for demographic factors. In this research, the primary algorithm learned the average face of conservatives and of liberals and then predicted the political leanings of unknown faces by comparing them to these reference images. Kosinski wrote that his findings about AI's abilities have grave implications: "The privacy threats posed by facial recognition technology are, in many ways, unprecedented."

While the questions behind this line of inquiry may not immediately trigger alarm, the underlying premise still fits squarely within physiognomy: predicting personality traits from facial features.

Sexuality. In 2017, Kosinski published another study showing that a neural network trained on facial images could be used to distinguish between gay and straight people. Surprisingly, experiments with the system showed an accuracy of 81 percent for men and 74 percent for women. By those numbers, the machine learning model performed well, but what was the value of the question the study asked? Inferring people's sexual orientation is often used for purposes of discrimination or criminal prosecution. In fact, more than 50 countries still have laws against same-sex sexual acts on the books, with punishments ranging from imprisonment to death. In applications like these, improving the accuracy of AI tools only magnifies the likely harm, and that is before considering the cases in which the tool's predictions are wrong. According to the BBC, organizations representing the LGBTQ community strongly criticized the research by Kosinski and his colleague, calling it "junk science." As with the study on political leanings, the authors argued that their study highlights the risk that facial recognition technology might be misused.

Criminality. AI, like humans, attempts to predict future events from historical data. And, just as with humans, if the historical data shown to an AI predictor are biased, the resulting predictions will carry artifacts of those biases. The clearest example of this is the attempt to infer criminality from facial images. In the United States, many studies have analyzed racial disparities in the judicial system. These studies consistently show that racial minorities are many times more likely to be both arrested and incarcerated for crimes, even when white people commit those crimes at the same rate. If an AI were trained to predict criminality on historical data collected in the United States, it would invariably find that being male and having darker skin are the best predictors of criminality. It would learn the same biases that have historically plagued the judicial system.

In 2020, as researchers at Harrisburg University were preparing to publish a paper on using face images to predict criminality, hundreds of academics signed a letter to Springer Nature urging the publisher to rescind its offer to publish. Alongside methodological concerns, their criticisms focused on the research's ethical dubiousness and the question it attempted to answer. In the end, the publisher announced that it would not move forward with the study. In this instance, along with the obvious dangers of predicting criminality before it occurs, a confounding factor calls all of the study's assumptions into question: a model trained on historical criminal-justice data will reflect the biases of current judicial systems.

The algorithmic tools behind these studies are, of course, more advanced than Aristotle's methods, but the questions these studies ask are just as problematic as the ancient Greeks'. In asking whether sexuality, criminality, or political views can be inferred from a person's face, and in showing that AI systems can indeed deliver such predictions, practitioners are lending credibility to the idea of using algorithms in ways that perpetuate biases. If governments or others were to follow up on these lines of research and use algorithms as the researchers did in these studies, the likely result would be facial recognition used to discriminate against or oppress groups of people.

The road to the AI-enabled utopia that optimists have predicted is looking increasingly full of pitfalls, including avenues of research that scientists ought not to pursue but do, even when they argue they are simply highlighting the risks of AI technology. AI has the same fundamental limitation that all tools do: Flawed AI systems, like flawed humans, can answer questions that an ethical society would shun. It is up to us to push back against the misapplication of AI that serves to automate biases.
