There’s a ‘ChatGPT’ for biology. What could go wrong?

By Sean Ekins, Filippa Lentzos, Max Brackmann, Cédric Invernizzi | March 24, 2023

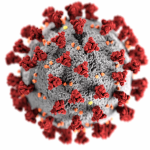

In recent months ChatGPT and other AI chatbots with uncanny abilities to respond to prompts with fluid, human-like writing have unleashed torrents of angst flowing from different quarters of society; the chatbots could help students cheat, encroach on jobs, or mass produce disinformation. Outside of the spotlight shining on the chatbots, researchers in the life sciences have also been rolling out similar artificial intelligence-driven technology, but to much less fanfare. That’s concerning, because new algorithms for protein design, while potentially advancing the ability to fight disease, may also create significant opportunities for misuse.

As biotech production processes evolve to make it easier for creators to make the synthetic DNA and other products they’ve designed, new AI models like ProtGPT2 and ProGen will allow researchers to conceive of a far greater range of molecules and proteins than ever. Nature took millions of years to design proteins. AI can generate meaningful protein sequences in seconds. While there are good reasons to develop AI technology for biological design, there are also risks to such efforts that scientists in the field don’t appear to have weighed. AI could be used to design new bioweapons or toxins that can’t be detected. As these systems develop alongside new, easier, cheaper, and faster production capabilities, scientists should talk to and learn from peers who focus on biosecurity risks.

What’s the concern with engineered proteins? While many applications of protein engineering are beneficial, protein toxin-based weapons have long been a concern. These are poisons created by organisms, like plants or fungi. Ricin, for example, a toxin made from castor beans, was likely used by Bulgarian agents in London in 1978 in the well-documented umbrella-assassination of Georgi Markov, a Bulgarian dissident. Another example is the botulinum neurotoxin; it’s 100,000 times more toxic than the nerve agent sarin and has been a staple of state bioweapons arsenals. If the technology for developing and producing protein toxins improved, say by making it easier to design novel toxins or improving on existing ones, that could pose a high risk.

The authors of an early paper on the risks of protein engineering, the field involved with designing and making proteins, noted in 2006 that manufacturing toxins in larger amounts was becoming more feasible. Instead of having to extract toxins from their sources, new technologies meant they could be produced in bacteria or other cells. Biosecurity experts Jonathan Tucker and Craig Hooper were particularly concerned with the growing medical interest in so-called “fusion toxins,” which could combine elements of a toxin like ricin with antibodies that could zero in on cancer cells. Instead of cancer cells, such a technology could potentially be turned against healthy cells. While protein engineering was making strides, the authors saw it as an area of dual-use risk “often overlooked” by the biosecurity community.

One thing limiting those risks, Tucker and Hooper said, was a lack of computing power to design new proteins. Some 17 years later, with the advent of AI-based protein design and much more computing power, this limitation seems to have eroded.

Why generate new proteins? AI-assisted protein design is a potentially revolutionary area in the life sciences. Proteins are the molecular machines of life. Humans and other species produce thousands of these complex molecules, which perform a wide array of critical functions. These include enabling your muscles to move, letting molecules in and out of cells, and breaking down the nutrients in food and drink. Where evolution has taken hundreds of millions of years or longer to perfect proteins, scientists can now “train” an AI, using hundreds of millions of protein sequences as examples, to do that much more rapidly.

But why would anyone want to make new proteins? Imagine a natural protein enzyme that catalyzes a chemical reaction useful for making a drug. An AI could conjure up an enzyme that makes the valuable product faster. Or it could come up with a slightly modified protein-based drug, one with improved qualities. Perhaps one day, AI could be useful in developing proteins with completely new reactions. Researchers could design proteins that specifically bind to and inhibit human proteins in order to correct a genetic disease. Or proteins that neutralize bacteria or viruses when they bind to them. Or proteins that break down pollutants. The commercial applications of generative protein design are potentially infinite.

What are the risks of AI-designed proteins? AI language models for protein design are being developed rapidly. ProtGPT2, for example, has been trained on 45 million protein sequences, ProGen on 280 million sequences. Both were described in articles published since 2022. The scientists behind the models used their software to design new proteins, which were then made and tested to verify that they were indeed functionally competent. The models are drawing direct comparisons to ChatGPT and its recent predecessors for their groundbreaking nature. In late January, Ali Madani, part of the team that developed ProGen, tweeted, “ChatGPT for biology? Excited to share our work on [AI models] for protein design out today.” Inspired in part by AI text generators, the researchers behind ProtGPT2 wrote, “we wondered whether we could train a generative model to…effectively learn the protein language….”

Life scientists are not naïve to the security dimensions of their trade. Although few would want their science to be used to deliberately cause harm, many, it seems, don’t fully appreciate how such risks could apply to their own areas of research. Protein designers are no different. At least there is no evidence in the papers or preprints on ProtGPT2 and ProGen that any such consideration is taking place. Protein designers also make software tools readily available, depositing them in widely accessible repositories, seemingly without considering potential security concerns. That’s a problem. New proteins may circumvent biodefenses and other controls.

Take for example the active site of ricin, the plant protein toxin. Ricin can inactivate ribosomes, the cellular structures that assemble proteins. In other words, it’s a dangerous poison. A protein engineering AI could, say, re-design the protein structure around ricin’s active site, potentially removing any sequence similarity to ricin. Anyone working on toxin detection technologies—biodefense or food security labs, for example—might find it difficult to identify new, unseen toxins. Established systems to detect and prevent the illegal export of toxins would not recognize them. While, fortunately, the activity of ricin depends on a combination of factors, and changing the surrounding protein structure will not necessarily on its own hamper detection and control, advances in gene synthesis, protein expression and purification techniques are adding speed and ease to developing computer-designed proteins. These will likely increase the risk of misuse.

Making AI-designed proteins. Designing a molecule or protein that performs a certain task may seem innocuous, but couple that capability with technology to actually make the product, and we enter a different realm. Could that actually happen? Well, there already have been advances in the field of robotic chemistry that create an integrated pipeline that designs and makes small molecules. While expanding this concept to the automated design and synthesis of larger molecules like proteins would be a big technological leap, recent developments in protein synthesis are enabling the small-scale, high-throughput production of some AI-created proteins. And biofoundries—cutting-edge facilities for the design and production of molecular biological products—are enabling the creation of other proteins. All that is needed is for the new protein design algorithms to be integrated with those production processes.

For us, this is a déjà vu moment. Previously, we stumbled upon the power of using generative AI to develop small molecule analogs of VX nerve agent, a powerful chemical weapon. Switch the settings on a drug-discovery AI, and instead of nontoxic molecules, the software will deliver the most toxic “drugs.” The generative protein design field offers immediate parallels, yet there is little recognition in the field of the need to address the potential dual-use risk of the technology. Proteins can come with much more 3D-complexity than the chemical compounds that make up many drugs, and it might be harder to “see” the nefarious purpose of newly designed proteins or enzymes. Indeed, they may not be comparable to anything known. But responsible science still demands consideration of the uses to which the science can be put other than those intended.

One way to address potential misuse is to follow a simple code: stop, look, and listen. If you are developing a new generative AI technology or application, stop and consider the potential for dual-use. Look at what other scientists have done to address this in their software. And listen when other scientists warn you of the potential for your technology to be misused. Failure to take such precautions could lead to the continuous repetition of teachable moments in the misuse of artificial intelligence—or far worse consequences.

Beyond even the substantial risks of protein generation, there is unprecedented potential to use generative AI to develop new and intrusive forms of surveillance, fueled by biological and genomic data; extremely toxic molecules; and even ultra-targeted biological weapons. The transformative power of the combination of AI and life science could lead humanity down an irreversible path, with unrivalled possibilities for coercive and lethal interference in life’s most intimate processes. Space must be opened up for conversations in both the international security community and in the life science community, as well as between these communities, on what safe, secure and responsible life science research involving AI might be.


Keywords: AI, ChatGPT, toxins

Topics: Biosecurity
