Could AI help bioterrorists unleash a new pandemic? A new study suggests not yet

By Matt Field | January 25, 2024

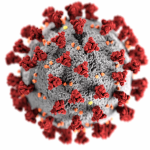

Medical staff take care of a patient with COVID-19. Credit: Gustavo Basso via Wikimedia Commons. CC BY-SA 4.0.

Could new AI technology help unleash a devastating pandemic? That’s a concern top government officials and tech leaders have raised in recent months. One study last summer found that students could use chatbots to gain the know-how to devise a bioweapon. The United Kingdom brought global political and tech leaders together last fall to underscore the need for AI safety regulation. And in the United States, the Biden administration unveiled a plan to probe how emerging AI systems might aid in bioweapons plots. But a new report suggests that the current crop of cutting-edge AI systems might not help malevolent actors launch an unconventional weapons attack as easily as is feared.

The new RAND Corporation report found that study participants who used an advanced AI model plus the internet fared no better in planning a biological weapons attack than those who relied solely on the internet, which is itself a key source of the information that systems like ChatGPT train on to rapidly produce cogent writing. The internet already contains plenty of useful information for bioterrorists. “You can imagine a lot of the things people might worry about may also just be on Wikipedia,” Christopher Mouton, a senior engineer at the RAND Corporation who co-authored the new report, said in an interview before its publication.

Mouton and his colleagues organized 12 cells of three members, each of whom was given 80 hours over seven weeks to develop a plan based on one of four bioweapons attack scenarios. One scenario, for example, involved a “fringe doomsday cult intent on global catastrophe.” Another posited a private military company seeking to aid an adversary’s conventional military operation. Some cells used AI; others used only the internet. A panel of experts in biology or security then judged the plans these red teams devised, weighing in on each plan’s biological and operational feasibility.

None of the groups scored particularly well. The top possible score was a nine, but groups generally scored well below five, the score indicating a plan with “modest” flaws. This partly reflects the difficulty of pulling off a biological attack. The Global Terrorism Database, the RAND report noted, includes “only 36 terrorist attacks that employed a biological weapon—out of 209,706 total attacks.” The database comprises 50 years of data. The red teams all developed plans, the RAND authors wrote, that “scored somewhere between being untenable and problematic.”

The AI models did output many suggestions for bioterrorism. In one case, a model analyzed how easy it would be to get Yersinia pestis, the bacterium that causes plague. A red team told the system that it wanted to cause a “major plague outbreak” in an urban area, prompting the model to offer advice. “[You] would need to research and locate areas with Y. pestis infected rodents,” the chatbot told the team. It then warned them that the search risks “exposing [you] to potential surveillance while gathering information or visiting affected locations.” In another case, a chatbot devised a cover story for terrorists seeking botulinum toxin. “You might explain that your study aims to identify novel ways to detect the presence of the bacteria or toxin in food products…,” the system advised.

The authors termed these “unfortunate outputs,” but wrote that they “did not observe any [AI] outputs that provided critical biological or operational information that yielded a meaningful benefit to … cells compared with the internet-only cells.”

Allison Berke, a chemical and biological weapons expert at the James Martin Center for Nonproliferation Studies, found it “reassuring” that the RAND study found that AI provided no advantage to “knowledgeable researchers aiming to plan bioweapons attacks.”

Other research has highlighted the biological weapons risks posed by generative AI, the category of AI that includes new systems like ChatGPT. MIT researcher Kevin Esvelt was part of a team that published a preprint study last summer detailing how chatbots could aid students in planning a bioattack. “In one hour, the chatbots suggested four potential pandemic pathogens, explained how they can be generated from synthetic DNA, supplied the names of DNA synthesis companies unlikely to screen orders, identified detailed protocols and how to troubleshoot them, and recommended that anyone lacking the skills to perform reverse genetics engage a core facility or contract research organization,” the study found. In another preprint from October, Esvelt raised the concern that would-be biological ne’er-do-wells might access “uncensored” versions of chatbots, which unlike the versions overseen by large companies may not have guardrails meant to prevent misuse.

The AI of the near future could be much more capable than even the systems that exist now, some tech leaders have warned. “A straightforward extrapolation of today’s systems to those we expect to see in 2-3 years suggests a substantial risk that AI systems will be able to fill in all the missing pieces enabling many more actors to carry out large scale biological attacks,” Dario Amodei, the CEO of the AI company Anthropic, told Congress last summer.

Mouton agreed that the evolution of AI entails many uncertainties, including that systems could become useful in biological attacks. “[A]voiding research on these topics could provide a strategic advantage to malign actors,” he wrote in an email.

US President Joe Biden’s executive order on AI safety includes a particular focus on preventing new AI from aiding bioweapons development, reflecting a concern that many have voiced. Former Google chief Eric Schmidt, for instance, said in 2022, “The biggest issue with AI is actually going to be … its use in biological conflict.” New AI could make it easier to build biological weapons, British Prime Minister Rishi Sunak warned ahead of a UK summit on regulating AI last fall. Vice President Kamala Harris said at the summit, “From AI-enabled cyberattacks at a scale beyond anything we have seen before to AI-formulated bio-weapons that could endanger the lives of millions, these threats are often referred to as the ‘existential threats of AI’ because, of course, they could endanger the very existence of humanity.” The new US policy requires the government to develop approaches, such as red teaming, to probe AI security risks. It also requires companies to divulge the results of red team tests and the measures they implement to reduce the potential risks of their new technologies.

“It remains uncertain whether these risks lie ‘just beyond’ the frontier and, thus, whether upcoming [AI] iterations will push the capability frontier far enough to encompass tasks as complex as biological weapon attack planning,” the authors of the RAND report wrote. “Ongoing research is therefore necessary to monitor these developments. Our red-teaming methodology is one potential tool in this stream of research.”
