From fission to fusion: the need for a quick transition

By Jason Parisi | August 19, 2014

When the first atomic bomb test, code-named “Project Trinity,” was conducted on July 16, 1945, civilization moved from the chemical era—during which atomic energy was outsourced to the sun—to the nuclear era, when induced atomic reactions on Earth could produce energy. Humanity’s relationship with the atom may be about to change again, into an age of controlled nuclear fusion for electricity generation.

If handled skillfully and with sufficient political will, fusion could reduce the threat of both nuclear weapons proliferation and climate change, the two risks that academic Noam Chomsky claims have the greatest chance of ending our very existence in the 21st century. But the transition from fission-generated electricity to fusion will be precarious if appropriate safeguards are not taken.

A new energy source is clearly needed, considering the five- to seven-fold increase in electrical demand predicted to occur between the years 2000 and 2100 and the potentially devastating impacts of man-made climate change caused by consuming fossil fuels to meet this demand. To overcome these challenges, nuclear energy may be part of the solution—however reluctant society may be to consider it.

When we think of nuclear energy, we have an overwhelming tendency to think of fission. There may be an even better source of nuclear energy, however, in the form of fission’s close cousin: fusion.

Nuclear fusion could come into play as soon as 2050, depending upon funding, the success of upcoming fusion experiments, and the viability of other alternatives, says a position paper published by the European Fusion Development Agreement in November 2012. And considering the problems involved with fission, the sooner we move to fusion, the better.

Fusion benefits regarding proliferation. In the most likely nuclear fusion reaction contemplated for use in energy production, atoms of two isotopes of hydrogen join, creating a helium isotope and throwing off energy. (In comparison, fission generates energy by splitting a heavy atom into several lighter atoms.)

A reactor using fusion to generate electricity is intrinsically safe: First, a runaway nuclear chain reaction cannot take place, under any circumstances. Second, no long-lived, highly radioactive products are created. Third, of those magnetic confinement fusion reactors that will require radioactive fuels such as tritium, both the radioactive fuel requirements and the fuel half-life are orders of magnitude lower than those of their fission counterparts.

In contrast, a fission reactor is inherently more dangerous: If its safety systems fail, it can undergo a runaway chain reaction; significant quantities of spent, radioactive fuel are produced; and refueling and waste disposal require that highly radioactive materials be transported.

Another bonus of fusion power is that there is enough raw material on Earth to supply the needs of fusion reactors for hundreds of thousands, if not millions, of years at current consumption levels, according to David MacKay, professor of engineering at Cambridge University and author of Sustainable Energy.

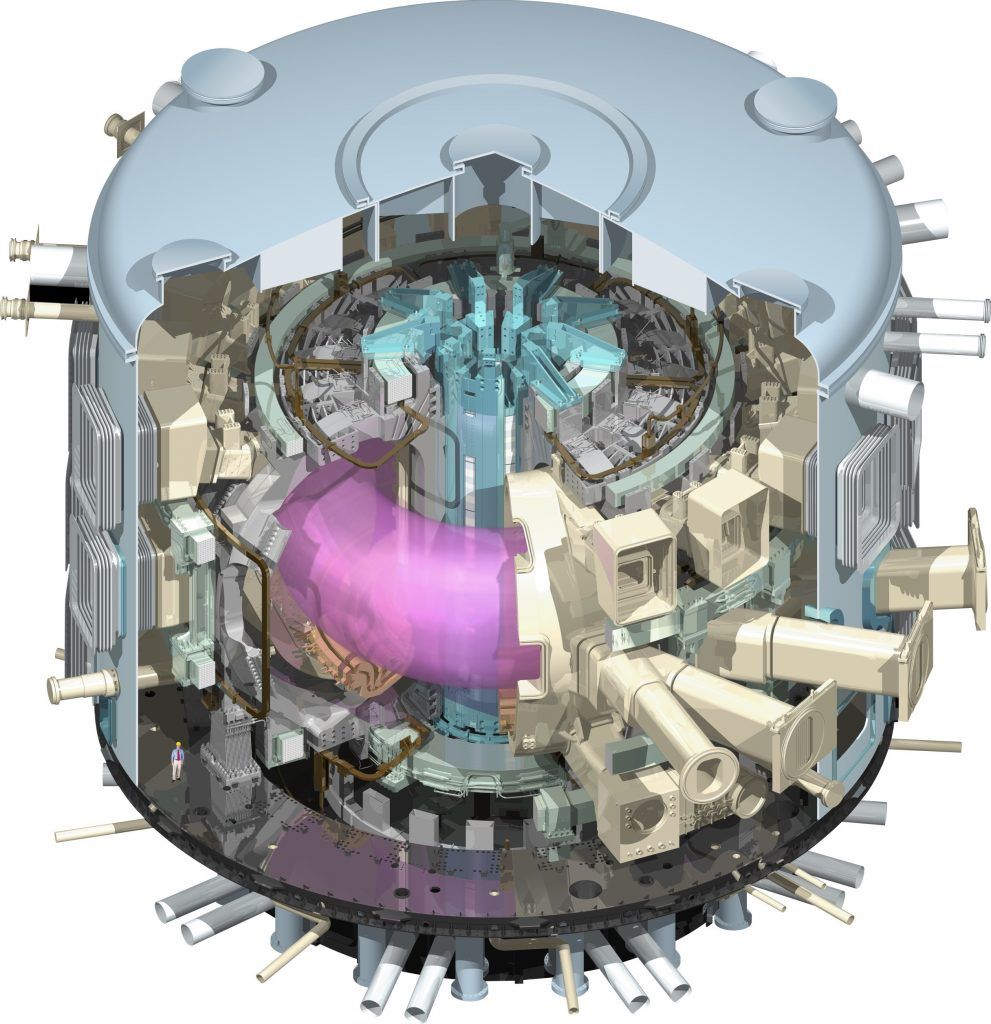

With these potential benefits in mind, there have been significant research and development efforts on fusion power since the 1950s. At present, the device most widely backed by physicists and engineers is the tokamak, a toroidal (doughnut-shaped) vacuum chamber that uses magnetic fields to confine plasma inside the device. This plasma is heated to very high temperatures, giving the nuclei within enough velocity to overcome the Coulomb repulsion between them and fuse together, releasing energy in the process. First-generation tokamak reactors generating electricity for the grid will use the heavy hydrogen isotopes deuterium and tritium as fuels; deuterium is abundant in nature and stable, while tritium is extremely rare and radioactive, with a half-life of about 12.3 years.

Decades of tokamak research have led to the construction of the International Thermonuclear Experimental Reactor, or ITER, in southeastern France. This international collaboration, with an estimated €13 billion ($18.9 billion) in construction costs, is designed to prove once and for all the feasibility of the tokamak design for energy generation. While there are other contenders, including stellarators (mechanisms in a figure-eight shape that control plasmas via magnetic confinement, much like a tokamak) and inertial confinement devices (mechanisms that compress and heat fuel, typically by using lasers), the tokamak probably most closely fits the bill for a first-generation, commercially viable fusion reactor.

Not that other areas of research are standing still. Investigators announced a major advance in fusion research in the February 12, 2014 issue of the journal Nature, when the National Ignition Facility used a powerful assembly of lasers to extract more energy from a controlled fusion reaction than was absorbed by the fuel to trigger it, an important symbolic milestone. But because this fusion reaction only showed a minimal net gain—it released about one percent of the total energy required to power the lasers that caused the reaction—laser inertial confinement fusion still needs to make profound scientific and technological advances before becoming commercially viable. In contrast, the world’s largest operating tokamak, the Joint European Torus, or JET, is already close to producing as much energy as is put in. Meanwhile, ITER is expected to produce 10 times as much energy as is put into it.

Fusion and weapons. From the standpoint of controlling nuclear weapons proliferation, the tokamak has several important plusses.

First, tokamak reactor cores are surrounded by a “lithium blanket,” which absorbs escaping neutrons to breed more tritium for use as reactor fuel. While this approach could theoretically be used to enrich fuel to weapons grade level by inserting thorium or uranium, researchers Robert J. Goldston and Alexander Glaser concluded that it would not be realistic for anyone to do so surreptitiously. This means that in a fusion-only era, any “horizontal proliferation”—the process by which non-nuclear states or entities obtain nuclear weapons—would become significantly harder. While in theory pure-fusion weapons could be created that release a lethal neutron dose within a radius of several hundred meters, this has not been achieved in practice.

Second, the tokamak device itself occupies only a small part of an entire tokamak reactor site. A large number of non-nuclear external systems are required to run it, such as vacuum systems, cryogenics, and power supplies; to fit them in, the ITER “platform” housing all the scientific apparatus is 42 hectares in size (approximately 104 acres), an area more than five times larger than New York City’s Rockefeller Center. The sheer geographic size of a tokamak reactor complex drastically lowers the possibility of any clandestine fusion plant construction, Goldston and Glaser say.

What’s more, once a fusion plant has been built, the detection of any weapons-grade fissile material produced there should be simple. By monitoring the lithium blanket for traces of fissile material, it should be easy to detect if a fusion plant is diverting some of its material to illegal weapons enrichment. Most likely, only a very small amount could be enriched, hardly enough for making a bomb, before attracting attention.

If such illicit activity is detected, disabling a tokamak would be relatively straightforward, due to that vast array of essential support systems. Even if the entire fusion reactor had to be destroyed—by dropping conventional bombs on it, for example—the radioactive fallout would be negligible, due to the low levels of fuel in the reactor at any given time (just a few grams). The worst damage would come from the destruction of the tritium storage system, which would release two-to-four kilograms of tritium—a substance with a 12.3-year half-life.

Because of these factors, it would be relatively easy to quickly and safely disable a fusion facility and eliminate any nuclear weapons grade materials located on site. Therefore, in a world powered only by fusion, it would be significantly harder to clandestinely enrich fissile material, putting the brakes on nuclear proliferation. (This is based on the assumption that no new clandestine enrichment technologies come onboard by that time; while centrifuges are fairly easy to detect, laser enrichment, for example, may be harder to monitor.)

Transition risks. The period during which both fission and fusion plants coexist could be dangerous, however. Just a few grams of deuterium and tritium are needed to increase the yield of a fission bomb, in a process known as “boosting.”

A full-sized fusion reactor would use about 250 kilograms of fuel per year, half tritium and half deuterium, which would significantly increase the amount of material available for such activities. Assuming that a one-gigawatt fusion plant uses 125 kilograms of tritium per year, and allowing for a very conservative one-percent level of uncertainty in the amount of tritium produced in the lithium blanket, a country with 10 one-gigawatt fusion reactors would have as much as 12.5 kilograms of tritium unaccounted for each year, or enough for several thousand boosted weapons.
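The arithmetic behind that estimate can be checked with a short Python sketch. The reactor count, tritium use per plant, and one-percent uncertainty come from the paragraph above; the function names and the three-grams-per-weapon figure are illustrative assumptions only, since the article says no more than that "a few grams" suffice for boosting.

```python
def unaccounted_tritium_kg(tritium_per_reactor_kg, reactors, uncertainty):
    """Tritium (kg/year) that could fall inside the accounting uncertainty."""
    return tritium_per_reactor_kg * reactors * uncertainty


def boosted_weapons(tritium_kg, grams_per_weapon):
    """Whole number of weapons that a given tritium mass could boost."""
    return int(tritium_kg * 1000 // grams_per_weapon)


if __name__ == "__main__":
    # Figures from the article: 125 kg per plant per year, 10 plants, 1 percent.
    missing_kg = unaccounted_tritium_kg(125, 10, 0.01)
    print(f"Unaccounted tritium: {missing_kg:.1f} kg per year")

    # ASSUMPTION: 3 g of tritium per weapon, chosen only to illustrate
    # "a few grams"; the article does not give an exact figure.
    print(f"Boosted weapons at 3 g each: {boosted_weapons(missing_kg, 3)}")
```

Under that assumed per-weapon figure the result lands in the low thousands, consistent with the "several thousand" in the text; a larger or smaller assumed mass per weapon shifts the count proportionally.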

Thus, it would not be feasible to monitor and control tritium supplies down to the tiny levels required to boost a bomb.

Another problem during transition lies in the ever-increasing sophistication of advanced facilities to simulate the effects of the explosion of nuclear weapons at laboratories such as the US National Ignition Facility. As the technology for computerized simulation of nuclear weapons becomes more widespread, more countries will be able to build and design increasingly powerful boosted weapons without actual testing, and at a pace that would be much faster than before. Consequently, the number of high-yield nuclear weapons and the number of countries that own them could increase substantially during the period of overlap between the eras of fission and fusion.

As a result, if humanity does decide to have fusion play a major role in energy generation, it should complete the changeover swiftly, before the capacity to build boosted and thermonuclear weapons becomes widespread. Even if only a handful of countries have the technology, resources, and willpower to construct facilities for making boosted and thermonuclear weapons, their very existence is a liability.

Will fusion be affordable? Some physicists and engineers have expressed concerns that even if a fusion reactor that produces significantly more energy than it consumes could be built, it still might not be economically viable within the current pricing framework. While the costs of a demonstration fusion reactor are fairly well known, first-generation tokamak electrical plants could be unaffordable when compared to nuclear fission, conventional fossil fuels, and even renewables.

But economic viability depends upon one’s point of view. The price of a fusion reactor (or any product, for that matter) leaves out significant negative or positive external costs, or “externalities,” and that omission distorts one’s perceptions of its value. For example, burning fossil fuels carries significant externalities, in the form of carbon emissions into the atmosphere, although they are typically not included in the price of electricity that a customer sees on a monthly electric bill. Rather, future generations pay those costs. As long as carbon is unpriced or heavily underpriced, fossil fuel technologies will continue to appear cheap compared to cleaner sources such as fusion.

However, even with these distortions, an extensive US study called ARIES-AT found that first-generation fusion reactors would be competitive with renewables and fossil fuels, even without a carbon tax. Since it is likely that some carbon-pricing mechanism will be enacted by the time fusion reactors come online, there is a strong likelihood that fusion will be very economically attractive.

If by 2050 there is a choice between building large numbers of fast breeder reactors (which could clandestinely provide fission fuel for thousands of nuclear weapons per year) and emitting an extra several billion tons of carbon dioxide into the atmosphere, the decision will not be easy. Avoiding this dismal choice requires widespread, commercially viable fusion, assuming there are no other viable technologies.

There comes a critical point in a civilization’s development when its resource and energy consumption are so great that exploiting the atom may well be the only way to maintain a high level of advancement. While nuclear technology has the potential to terrify, dehumanize, and exterminate, if used wisely it also has the potential to liberate human civilization from the shackles of fossil fuels. A swift transition from fission to fusion not only would allow us to escape the worst medium- to long-term environmental and social ramifications of climate change, it also would enable the creation of a more stable and credible equilibrium in a world with no nuclear weapons. A world without nuclear weapons but with fissile material will always be in fragile equilibrium; a world without either would be far more sustainable.

Humanity’s past failure to wield the double-edged nuclear sword skillfully has been permanently etched into the annals of history, from the bombing of Hiroshima and Nagasaki, to the global build-up to 65,000 nuclear weapons, to dozens of serious near-misses, during which nuclear war was only narrowly averted. Reaching and navigating the fusion era, an advanced step in the progression of nuclear technology, would be a testament to humanity’s foresight and organization, as well as a catalyst for nuclear weapons abolition and the curtailing of the extremes of climate change.

