2012: An elemental force: Uranium production in Africa, and what it means to be nuclear

By Gabrielle Hecht | December 7, 2020

Editor’s note: This article was originally published in 2012. Based largely on Hecht’s book, Being Nuclear: Africans and the Global Uranium Trade (MIT Press, 2012), the article draws on over a decade of research in corporate and national archives in Africa, Europe, and North America, as well as published sources and extensive interviewing. It is republished here as part of our special issue commemorating the 75th year of the Bulletin.

In January 2003, US President George W. Bush declared in his State of the Union address that “the British government has learned that Saddam Hussein recently sought significant quantities of uranium from Africa.” The intelligence, his administration insisted, was unequivocal: Iraq had tried to purchase 500 tons of yellowcake from Niger. Trust us, said officials, trotting out more questionable evidence: Saddam is building “the bomb.”

If the death toll were not so huge, the suffering and violence not so vast and ongoing, the next part of the story would be comical. The so-called evidence—procured by a shady Italian businessman—turned out to be forged. In fact, the forgeries were so inept that when International Atomic Energy Agency (IAEA) experts finally saw them in March, they immediately guessed the documents were fake and proved it within hours. By then it was too late, of course. The war had already begun.

As the story unfolded, layers of intrigue accumulated in the US press. Former diplomat Joseph Wilson wrote to the New York Times, describing his visit to Niger and discrediting Bush’s claims. The administration retaliated by outing Wilson’s wife, CIA operative Valerie Plame. The media set out to “follow the yellowcake road,” and did so by focusing on Americans—Wilson, Plame, and various Bush officials—rather than on the actual heart of the story: The transmutation of “uranium from Africa” into “atom bomb for Iraq,” an alchemy that—still today—most people don’t question. The (nonexistent) 500 tons of yellowcake became the most visible element of the dubious evidence concerning Iraqi atomic bomb efforts.

So what explains the power of the phrase “uranium from Africa”? Why did the claim work so well from a political and cultural perspective? Had the forged evidence concerned Kazakhstan—another major producer—would the administration have talked about “uranium from Asia”? Highly unlikely. In mainstream Western political imagination and media, Africa remains the Dark Continent, mysterious and politically corrupt—plausible qualifications for a nuclear supplier. And what better candidate for shady dealings than Niger, a nation most Americans couldn’t distinguish from Nigeria? Consider also the assumption that acquisition of uranium would constitute prima facie evidence of a bomb program’s existence. Before uranium becomes weapons-usable, it must be mined as ore, processed into yellowcake, converted into uranium hexafluoride, enriched, and pressed into bomb fuel. “Uranium” is therefore as underspecified technologically as “Africa” is underspecified politically.

The Niger episode reflects the ambiguities of the nuclear state, and the state of being nuclear. But what exactly is a nuclear state? Does a uranium enrichment program suffice to make one of Iran, as its president Mahmoud Ahmadinejad has claimed? Or are atomic bomb tests the deciding factor? Such ambiguities cannot be dismissed as doublespeak or grandiose ranting. They matter too much to be discounted so easily.

The nuclear status of uranium is an important aspect of these ambiguities. When does uranium count as a nuclear substance? When does it lose that status? And what does Africa have to do with it? Such issues lie at the heart of today’s global nuclear order. Or disorder, as the case may be. The questions themselves sound deceptively simple. Understanding their significance and scope requires knowing their history.

The state of being nuclear

The atom bomb has become the ultimate fetish of our times (Abraham, 1998). World order has been created and challenged in its name and for its sake. Salvation and apocalypse, sex, and death: The bomb’s got it all. In the two decades following World War II, the bomb became the ultimate political trump card, first for the superpowers (the United States in 1945, the Soviet Union in 1949); then for waning colonial powers (the United Kingdom in 1952, France in 1960). Other nations soon followed: China in 1964 and Israel in the mid-1960s. Geopolitical status seemed directly proportional to the number of nukes a nation possessed.

Although more than 22,000 nuclear warheads now populate the planet, they somehow retain their singularity. We still hear about the bomb. The implication is that nuclear things are unique, different in essence from ordinary things. I call this insistence on an essential nuclear difference—manifested in political claims, technological systems, cultural forms, institutional infrastructures, and scientific knowledge—“nuclear exceptionalism.”

As a recurring theme in public discourse since 1945, nuclear exceptionalism often transcended political divisions, allowing both Cold Warriors and their activist opponents to portray atomic weapons as fundamentally different from any other human creation. Nuclear scientists and engineers enjoyed far more prestige, power, and funding than their “conventional” colleagues. Anti-nuclear groups highlighted the unprecedented qualitative and quantitative dangers posed by exposure to radiation. The rupture in nature’s very building blocks, wrought during fission, propelled claims of a corresponding rupture in historical space and time. Morality-speak inevitably accompanied debates, rendering nuclear things either sacred or profane.

Yet whatever the political leaning, exceptionalism expressed the sense that an immutable ontology distinguished the nuclear from the non-nuclear. The difference between nuclear and non-nuclear came down to fission and radioactivity—or so it seemed. The nuclear was thus rendered as a universal and universalizing ontology—one that applied in the same way, all over the globe.

Scholars and policy makers have tended to follow suit. This has made it difficult to understand “nuclearity”—that is, how places, objects, or hazards get designated as nuclear. Designating something as nuclear—whether in technoscientific, political, or medical terms—carries high stakes. Fully understanding those stakes requires layering stories that are usually kept distinct: atomic narratives and African ones, histories of markets and histories of health.

Things that count as nuclear at one time and place may not count as such at another. Rendering something—a state, an object, an industry, a workplace—as nuclear and therefore exceptional is a form of technopolitical claims-making. And so is the obverse: namely, insisting that certain things are not especially nuclear and are hence banal.

But nuclearity cannot be understood as a transparent ontological distinction. Instead, it should be treated as a contested technopolitical category. Nuclearity is not so much an essential property of things as it is a property distributed among things. Radiation matters, but its presence does not suffice to turn mines into nuclear workplaces. After all, as the nuclear industry is quick to point out, people absorb radiation all the time by eating bananas, sunbathing, or flying over the North Pole. For a workplace to fall under the purview of agencies that monitor and limit exposure, the radiation must be human-made, rather than natural. But is radiation emitted by underground rocks natural (as mine operators sometimes argued) or human-made (as occupational health advocates maintained)? The nuclearization of uranium—and of its mines—requires work: work that is at once scientific, technological, political, and cultural.

Nuclearity is something achieved, which also means that it can be undone. Put differently: Radiation is a physical phenomenon that exists independently of how it’s detected or politicized. Nuclearity is a technopolitical phenomenon that emerges from political and cultural configurations of technical and scientific things. It is not the same everywhere, it is not the same for everyone, and it is not the same at all moments in time.

Consider that in 2003, when Bush made his speech, yellowcake from Niger made Iraq nuclear. But in 1995, yellowcake from Niger didn’t make Niger itself nuclear. According to a major US government report on proliferation that year, Niger, Gabon, and Namibia had no “nuclear activities” (Office of Technology Assessment, 1995: Appendix B). Yet together these nations accounted for more than one-fifth of the uranium that fueled power plants in Europe, the United States, and Japan that year. For decades, experts had noted that workers in uranium mines were “exposed to higher amounts of internal radiation than … workers in any other segment of the nuclear energy industry” (Holaday, 1964: 51). But neither workers’ radiation exposures nor the role of this uranium in the global nuclear power industry was enough to render uranium mining in these countries a nuclear activity.

Making uranium not nuclear

To understand when uranium counts as a nuclear thing, when it loses its nuclearity—and what Africa has to do with that distinction—it is worth revisiting 1957, the year the IAEA was founded. Writing the agency’s governing statute involved discussions over which countries would secure permanent seats on its Board of Governors. Knowing that international opposition to apartheid could prevent its election to the board, South Africa engaged in a strong lobbying effort. It wanted to influence the emergence of a uranium market: Its uranium contracts with the United States and the United Kingdom would soon draw to a close, and it needed new customers. In the thick of the Suez crisis, South Africa seemed more palatable than Egypt or Israel as the board representative from the so-called Africa and Middle East region. On the strength of its uranium production, South Africa won the seat.

Barely a decade later, however, uranium mines lost their nuclear status. The IAEA’s 1968 safeguards document specifically excluded mines and mills from the classification of “principal nuclear facility,” defined as “a reactor, a plant for processing nuclear material irradiated in a reactor, a plant for separating the isotopes of a nuclear material, [or] a plant for processing or fabricating nuclear material (excepting a mine or ore-processing plant).” The 1972 safeguards document further excluded uranium ore from the category of “source material.”1 International authorities thus did not consider uranium as nuclear until it became feed for enrichment plants or fuel for reactors.

Excluding uranium ore and yellowcake from the category of nuclear things meant that mines and yellowcake plants were formally excluded from the Nuclear Non-Proliferation Treaty’s (NPT) safeguards and inspection regimes. Uranium’s nuclearity plummeted because several uranium-producing countries—most notably, apartheid South Africa—actively lobbied for such an exclusion starting in the late 1960s. South Africa, for one, now had other nuclear facilities and didn’t need mines to qualify as a “nuclear” nation.

The push to denuclearize was driven by the desire to commodify. Freedom from direct inspections opened the possibility of treating uranium (in yellowcake form) not as an exceptionally nuclear thing that had to be carefully monitored, but rather as a banal commodity like any other—one that could (in principle) be bought and sold without government or international oversight.

The uranium boom in Africa

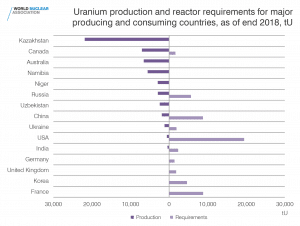

Yellowcake from Niger may not have entered Iraq in 2002, but uranium from Africa was, and remains, a major source of fuel for atomic weapons and power plants throughout the world. Uranium for the Hiroshima bomb came from the Belgian Congo. During any given year of the Cold War, between 20 percent and 50 percent of the Western world’s uranium came from African nations: Congo, Niger, South Africa, Gabon, Madagascar, and Namibia.

In the last few years, several African countries have experienced a uranium boom. The driving force behind this is demand—or, more precisely, expectations of demand. Embracing climate change concerns, the nuclear industry has offered visions of a “nuclear renaissance” free of greenhouse gases. Nuclear power began to seduce African governments, several of which expressed the desire for nuclear reactors to address their energy needs. More significant for African uranium production in the immediate future, though, is China’s dramatic nuclear power expansion, which calls for some 10,000 tons of uranium oxide to attain its 2020 target. To meet this demand, Chinese companies have been prospecting for uranium in Niger, Namibia, and Zimbabwe (as well as Mongolia, Uzbekistan, and Kazakhstan). China’s main competitor is Areva, the French parastatal corporation that took over management and development of France’s nuclear fuel cycle in 2001.

Areva continues to control most of Niger’s uranium production, though Chinese companies have made inroads. Somina, a joint venture controlled by majority Chinese capital, has begun to mine the Azelik deposit in Niger’s Agadez region. Even before the first ton of uranium emerged from the ground, workers and nongovernmental organizations (NGOs) protested the low pay, bad housing, dangerous working conditions, and poor sanitation. Meanwhile, uranium companies have been at the center of the struggle between Tuareg rebels and the national Nigerien government. On several occasions in the last five years, rebels have kidnapped Chinese and French uranium employees, using the hostages as leverage in their struggle over resources and rights in the north of the country.

Many in Africa’s political elites relish the competition among uranium seekers from China, France, and other countries. Oceans away, investors in North America, Europe, and Australia enthusiastically embrace uranium’s potential for profit. Pre-Fukushima, fantasies about a nuclear renaissance pushed up the spot price of uranium oxide: The price of a pound of uranium climbed from $16 in January 2004 to $26 in 2005, $37 in 2006, then $75 by January 2007. In May 2007, the New York Mercantile Exchange opened the first futures market for uranium. In June 2007, the month after futures trading began, the spot price hit $136 per pound before tapering down to its 2010 low of about $40 per pound.

Nuclearity in Africa: Commodification in Niger, occupational health in Gabon

This denuclearization of uranium ore and yellowcake had all sorts of implications throughout Africa, many of them quite negative for the global nonproliferation regime and for Africans. These implications can be grasped by looking at two countries: Niger, where uranium mining took off in the 1970s, and Gabon, where uranium mines operated from the late 1950s to the late 1990s and which is only now dealing with the health and environmental legacy of that mining. In both countries, uranium mining was launched by the Commissariat à l’Énergie Atomique (CEA), which managed France’s nuclear fuel cycle until the creation of Areva’s predecessor, Cogéma, in 1976.2

Niger. Niger gained its independence from France in 1960, but the CEA remained in the country to develop newly found uranium deposits. The agency created two companies to conduct mining, each allocating a portion of its capital to the Nigerien state (25 percent in one case, 32 percent in the other).

Hamani Diori, Niger’s first post-colonial president, sought to maximize the benefits of these deposits in order to secure his nation’s sovereignty over its natural resources. He and his advisers kept close track of nuclear debates and developments in France. They fully grasped France’s desire for national nuclear exceptionalism (Hecht, 2009) and explicitly linked Niger’s uranium to France’s nuclear interests. Diori insisted that two successive French presidents acknowledge, in writing, the special significance of uranium-related transactions, then used this as leverage to ensure that uranium revenues and prices were discussed as matters of state diplomacy, rather than matters for corporate negotiation.

Inspired by the Organization of Petroleum Exporting Countries’ (OPEC) 70 percent increase in the price of crude oil in 1973, Diori sought similar leverage over the price of uranium. In 1974, he tried to emulate OPEC’s model with the help of Gabon, France’s other main uranium producer. Under the rubric of nuclear exceptionalism, Niger’s representatives argued that “the content of uranium transcended commercialism.”3 They reasoned that if Niger could contribute to the exceptional nuclearity of France, then surely France could make exceptional contributions to the economic development of Niger—notably, by paying a price for uranium that reflected its political, nuclear significance.

In response, the French delegation sought to denuclearize uranium by insisting on the banality of the market. Drawing upon IAEA definitions of what did and didn’t count as a nuclear material—and upon various market devices that uranium mining corporations and international agencies used to convert uranium into a sellable commodity—the French insisted that the only possible way to determine the value of uranium was to treat it like an ordinary market commodity.

Trilateral discussions were interrupted when Diori was ousted by a military coup in April 1974. His successor, Seyni Kountché, went for a different sort of market arrangement. Kountché negotiated an agreement that entitled Niger to sell—directly and independently—a proportion of yellowcake output equal to the percentage of its capital holdings in the mining companies. This in turn freed Niger to develop a customer list that many Western governments would find increasingly dangerous.

Under Kountché, the state found it more lucrative to plunge directly into the uranium market. National and regional issues mattered far more to Niger’s leaders than Cold War superpower politics. For example, in 1981—not long after Libya’s attempt to annex Chad—Kountché declared that Niger needed funds so badly that “if the devil asks me to sell him uranium today, I will sell it to him” (the devil, in this rendition, being none other than Muammar Qaddafi) (Yemma, 1981: 8).

Reliable, accessible sources on yellowcake contracts signed by Niger are scant. But most agree that customers for Niger’s portion of uranium between the mid-1970s and the mid-1980s included Iraq (around 300 tons in 1981—not the infamous 500 tons claimed in 2003), Libya (perhaps up to 2,700 tons), and Pakistan (around 500 tons in 1979, routed secretly through Libya, and perhaps more in the mid-1980s).4 The denuclearization of uranium—its treatment as an ordinary commodity—thus posed a threat to the world order embodied by the NPT.

Gabon. The COMUF (Uranium Mining Company of Franceville) uranium mine began operations in Mounana, Gabon, in 1957, three years before the French colony gained its independence. The mine was a joint venture between the French CEA and Mokta, a colonial mining company. COMUF’s first director, Xavier des Ligneris, was a CEA executive who had spent years running uranium mines in France. In Gabon, he tried to follow radiation protection protocols used in his home country, which included issuing film badges to workers to track their gamma exposures and ambient dosimetry to track radon.

Almost immediately, the monitoring system recorded high levels of both gamma and alpha radiation—sometimes up to 12 times the maximum permissible levels (MPLs) established by French regulatory bodies. Upgrades to the mine’s ventilation system temporarily corrected the problem, but they were costly. In 1968, Mokta replaced des Ligneris with its own man, Christian Guizol, who was more attuned to budget constraints. When both types of radiation exposure climbed in late 1968, Guizol reconfigured the calculus of exposure by simply raising the MPLs, the thresholds beyond which exposure became over-exposure. He noticed that—when applied to the specific conditions that operated at COMUF—International Labour Organization guidelines were less restrictive than those used in France. And so, after a few numerical gymnastics, Guizol enacted the equivalent of a three-fold increase in MPLs. The effect was immediate: In December 1969, 56 workers exceeded threshold exposure levels. By March 1970, not a single worker exceeded the new, higher limits.5

Around the same time, a shift boss named Marcel Lekonaguia, who was in charge of blasting in the uranium mines, was beginning to contest management’s complacency about the health effects of uranium work. He insisted that inhaled uranium dust had led to a persistent cough and assorted other ailments that would plague him for the rest of his life.

Lekonaguia also wondered about the film badges that workers wore—and especially the tight discipline that the company exerted in regard to them. In 1998, I met Lekonaguia in his home near Mounana. All he ever found out, he told me, was whether he’d reached some limit that would cause him to be moved. He never found out what the numbers were, how close to the limit he was, or how much he’d accumulated over time. His brother, Dominique Oyingha, became convinced that the company was hiding something and that the Gabonese state was complicit.

After visiting independent doctors in Congo who told them that Lekonaguia’s ailments were work related, the brothers appealed to the Gabonese government for help. Lekonaguia was granted some sick leave, but he wanted more: He wanted permanent leave and compensation. The company refused. The mine doctor refused to release his medical records, citing professional secrecy. In protest, Lekonaguia refused to turn in his film badge. From Lekonaguia’s perspective, the films didn’t just record radiation—they were also witnesses to his illness. He hoped that someday he would find someone to read his diagnosis—along with the chain of causality that linked work to illness—directly from the films. He may not have been alone in this belief. In the mid-1980s, COMUF’s quarterly radiation protection reports showed that in some months, as many as 25 percent of workers did not turn in their radiation-detection badges.

Many COMUF workers remained suspicious about their occupational health status well after the mine shut down in 1999. Complaining that remediation work focused only on containing loose ore left behind by the mining activities and not on people’s health, former workers sought a medical nuclearity for their work. Inspired by reports of an NGO that addressed illnesses in still very active Nigerien uranium mines, a group of Mounana residents formed their own NGO, advocating for a health and environmental monitoring program as well as medical compensation. They joined forces with several French NGOs, which together sent a small team of scientists, doctors, and lawyers to Mounana in June 2006.6

The team took independent environmental readings and interviewed nearly 500 former COMUF employees about their health and work experience. Most employees reported no formal training on radiation or radon-related risks and no feedback on their monthly dosimetric readings. They asserted that the Gabonese state had done nothing to monitor working conditions or occupational health. One former medical doctor testified that company clinicians had no training in uranium-related occupational health and that the company’s radiation protection division consistently refused to transmit dosimetric readings to the medical division. Residents displayed a range of symptoms, many lung-related, but their illnesses remained undiagnosed and untreated. The team’s report—which included similar findings for Nigerien uranium mines—was released at a much-publicized press conference in Paris in April 2007 (Daoud and Getti, 2007). The survey responses echoed narratives I heard in my own research.

Areva responded by promising to install “health observatories” in Gabon and Niger. These institutions, however, were a far cry from remediation and compensation. In 2009, two documentaries that aired on French television raised the public relations stakes: One showed radioactive contamination produced by uranium mining in France itself; the second documented the various problems identified by NGOs in uranium mines in Gabon and Niger.

In June 2009, two French NGOs announced an agreement with Areva. The corporation and the NGOs would form a joint committee that would not only name experts to oversee the health observatories, but also define protocols for data collection, analyze results obtained by all the health observatories, and make proposals to improve the environment at the sites. Parties to the agreement celebrated, declaring in a joint press release: “Areva’s openness to dialogue testifies to its willingness to respond to the worries of citizens who are ever-better informed and advised. By allowing itself to engage in discussions with Areva, civil society, for its part, shows that an accord can have a constructive impact on local populations” (Areva, 2009).

But other NGOs were skeptical that the agreement would result in prompt, effective remediation. Even less promising was the fact that no residents or workers were included in the committee, a striking oversight given how little COMUF workers trusted the state. Nevertheless, Mounana residents obtained a modest victory in March 2011, when Areva agreed to demolish 200 houses with extremely elevated radon levels. (They’d been built with mine tailings, much like houses in Grand Junction, Colorado, in the 1960s.) But the resumption of uranium prospecting in southeastern Gabon leads area residents to suspect renewed collusion between the corporation and the state. In mid-2011, the doctor in charge of examining former workers told a radio producer that she couldn’t reach any conclusions because she didn’t have national databases—most notably, cancer registries—against which to compare the health of former workers (Strauss, 2011). A similar situation exists among uranium communities in Niger.

So far, then, this unprecedented agreement to deal with health problems in former or current uranium production sites has done little to help irradiated workers in Gabon (or Niger, where uranium mines remain active). Until tensions surrounding the relationship between knowledge production and public representation are resolved, any nuclearity produced by the observatories is unlikely to carry much political, medical, or economic weight for workers and their communities. They may have suffered radiation-related injuries, but unless the injuries are brought into a nuclear framework—one that includes not only exposure records, but also a means of linking those records to global knowledge systems, individual health diagnoses, compensation mechanisms, and more—former and current workers may get little in the way of relief or compensation.

Why the nuclear designation matters

The IAEA consensus in the 1970s—that uranium mining was not an inherently “nuclear” activity—paved the way for buyers and sellers to treat uranium oxide like an ordinary market commodity. Because of this, Niger’s uranium companies were able to ship significant amounts of yellowcake to countries whose nuclear activities weren’t approved by the world’s nonproliferation regime.

Meanwhile, in Gabon, Niger, and many other African countries, public health infrastructures shaped by colonialism, missionary work, mineral extraction, and other interests have focused on infectious disease, malnutrition, and fertility. This focus has influenced which health statistics are collected, and has resulted in a widespread absence of national cancer and tumor registries (Livingston, forthcoming). This, in turn, has made it nearly impossible to track the health effects of uranium mining. How could anyone know whether uranium has caused excess cancer without data establishing the baseline cancer rate?

The scientific question—does radon exposure cause cancer?—is always one of history and geography. It has no single, simple answer outside the politics of expert controversy, labor organization, capitalist production, or colonial difference and history. As an epistemological question, it is fundamentally about the relationship of the past to the future. As a political question, it is about laying claim to (or withholding) resources. For workers, of course, these two aspects of the question are inseparable. Their question then becomes: When and how can the universalizing claims of nuclearity work for them?

The question is more salient now than ever. Although the Fukushima accident has led to a pause in some excavations, many large mining projects are proceeding apace in Namibia, Niger, and Malawi. In other countries with identified uranium deposits (such as Tanzania and the Central African Republic), state officials appear keen to proceed with mining.

Eager to do business, mine operators—backed by state officials—pit the immediate urgency of development against the long-term uncertainties of contamination. Namibia has a fledgling regulatory system, but the other countries mostly lack the complex infrastructures required to monitor and mitigate exposures. How much would the price of uranium rise if it incorporated the full cost of nuclearity in Africa? That remains to be calculated.

This article is based on research funded by the National Science Foundation (awards SES-0237661 and SES-0848568), the National Endowment for the Humanities, the American Council of Learned Societies, and the University of Michigan.
