ITER is a showcase … for the drawbacks of fusion energy

By Daniel Jassby | February 14, 2018

Nuclear fission power plants in Cattenom, France. Image courtesy Electricite de France/Anthony Fausser

A year ago, I wrote a critique of fusion as an energy source, titled “Fusion reactors: Not what they’re cracked up to be.” That article attracted a lot of interest, judging from the more than 100 reader comments it received. Consequently, I was asked to write a follow-up and continue the conversation with Bulletin readers.

But first, some background, for the benefit of those just coming into the room.

I am a research physicist who worked on nuclear fusion experiments for 25 years at the Princeton Plasma Physics Lab in New Jersey. My research interests were in plasma physics and neutron production related to fusion-energy research and development. Now that I have retired, I have begun to look at the whole fusion enterprise more dispassionately, and I feel that a working, everyday, commercial fusion reactor would cause more problems than it would solve.

So I feel obligated to dispel some of the gee-whiz hyperbole that has sprung up around fusion power, which has been routinely heralded as the “perfect” energy source and touted all too often as the magic bullet solution to the world’s energy problems. Last year’s essay made the case that the endlessly proclaimed features of energy perfection (usually “inexhaustible, cheap, clean, safe, and radiation-free”) are all debunked by harsh realities—and that a fusion reactor would actually be close to the opposite of an ideal energy source. But that discussion largely involved the characteristic drawbacks of conceptual fusion reactors, which fusion proponents continue to insist will somehow, someday, be surmounted.

Now, however, we are at a point where, for the first time, we can investigate a prototypical fusion reactor facility in the real world: the International Thermonuclear Experimental Reactor (ITER), under construction in Cadarache, France. Even if actual operation is still years away, the ITER project is sufficiently advanced that we can examine it as a test case for the doughnut-shaped design known as the tokamak, the most promising approach to achieving terrestrial fusion energy based on magnetic confinement. In December 2017, the ITER project directorate announced that 50 percent of the construction tasks had been accomplished. This important milestone offers considerable confidence in the eventual completion of what will be the only installation on Earth that even remotely resembles what is supposed to be a practical fusion reactor. As the New York Times wrote, this facility “is being built to test a long-held dream: that nuclear fusion, the atomic reaction that takes place in the sun and in hydrogen bombs, can be controlled to generate power.”

Plasma physicists regard ITER as the first magnetic confinement device that can possibly demonstrate a “burning plasma,” where heating by alpha particles generated in fusion reactions is the dominant means of maintaining the plasma temperature. That condition requires that the fusion power be at least five times the external heating power applied to the plasma. Although none of this fusion power will actually be converted to electricity, the ITER project is mainly touted as a critical step along the road to a practical fusion power plant, and that claim is our concern here.
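
The factor of five follows from how the energy of each D-T reaction is split. Of roughly 17.6 MeV released, about 3.5 MeV goes to the alpha particle, which stays in the plasma and heats it, and about 14.1 MeV goes to the neutron, which escapes. Alpha heating therefore matches the external heating only when the fusion power is about five times larger. The minimal Python sketch below assumes those textbook energies and ignores radiation and transport losses; the function names are illustrative only.

```python
# Approximate energy split of one deuterium-tritium fusion reaction, in MeV.
E_ALPHA_MEV = 3.5     # carried by the alpha particle, deposited in the plasma
E_NEUTRON_MEV = 14.1  # carried by the neutron, which leaves the plasma
E_TOTAL_MEV = E_ALPHA_MEV + E_NEUTRON_MEV  # about 17.6 MeV


def alpha_fraction() -> float:
    """Fraction of D-T fusion power that ends up as alpha-particle heating."""
    return E_ALPHA_MEV / E_TOTAL_MEV


def min_gain_for_burning_plasma() -> float:
    """Smallest Q = P_fusion / P_external at which alpha heating equals external heating."""
    return 1.0 / alpha_fraction()


def alpha_heating_dominates(p_fusion_mw: float, p_external_mw: float) -> bool:
    """True when alpha heating (alpha_fraction * P_fusion) is at least the external heating."""
    return alpha_fraction() * p_fusion_mw >= p_external_mw


print(f"alpha fraction: {alpha_fraction():.3f}")          # 0.199
print(f"minimum Q: {min_gain_for_burning_plasma():.2f}")  # 5.03
print(alpha_heating_dominates(p_fusion_mw=500.0, p_external_mw=50.0))  # True
```

With ITER's nominal 500 MW of fusion power and 50 MW of injected heating, alpha heating would be about 100 MW, roughly twice the external input.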

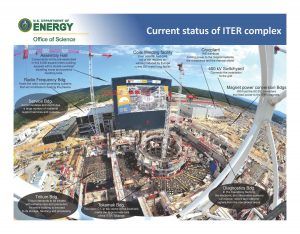

Let us see what can be deduced about some possibly irremediable drawbacks of fusion facilities by observing the ITER endeavor, concentrating on four areas: electricity consumption, tritium fuel losses, neutron activation, and cooling water demand. The physical layout of this $20-to-30 billion project is displayed in the photograph below.

A misguided motto. On the ITER website one is greeted by the proclamation “Unlimited Energy,” which is also the battle cry of fusion enthusiasts everywhere. The irony of this slogan is apparently lost on project staff and not suspected by the public. But anyone following the construction at the ITER site in the last five years—and it is easily followed by detailed photographs and descriptions on the project website—would have been struck by the tremendous amount of invested energy.

The website implicitly boasts of this massive energy investment, depicting every one of the ITER subsystems as the most stupendous of its kind. For example, the cryostat, the vacuum chamber that encloses the vacuum vessel and the superconducting magnets, is the world’s largest stainless steel vacuum vessel, while the tokamak itself will weigh as much as three Eiffel towers. The total weight of the central ITER facility is around 400,000 tons, of which the heaviest components are 340,000 tons for the foundations and buildings of the tokamak complex, and 23,000 tons for the tokamak itself.

But boosters should be distressed rather than ecstatic, because biggest and greatest means big capital outlay and great energy investment, which must appear on the negative side of the energy accounting ledger. And this energy has been largely provided by fossil fuels, leaving an unfathomably large “carbon footprint” for site preparation and construction of all the supporting facilities, as well as the reactor itself.

At the reactor site, fossil-fuel-powered machines excavate huge volumes of earth to a depth of 20 meters and manufacture and install countless tons of concrete. Some of the world’s largest trucks (powered by fossil fuels) convey mammoth reactor components to the assembly site. Fossil fuels are burned in the extracting, transporting, and refining of the raw materials needed to make fusion reactor components and possibly in the manufacturing process itself.

One may wonder how that expended energy could ever be paid back—and of course it won’t. But the very visible embodiment of the tremendous energy investment represents only the first component of the ironic “Unlimited Energy.”

Adjacent to these buildings is a 10-acre electrical switchyard with massive substations handling up to 600 megawatts of electricity, or MW(e), from the regional electric grid, which is enough to supply a medium-sized city. This power will be needed as input to supply ITER’s operating needs; no power will ever flow outward, because ITER’s internal construction makes it impossible to convert fusion heat to electricity. Remember that ITER is a test facility designed purely as a proof of concept, to show how engineers can mimic the inner workings of the sun by fusing atomic nuclei in a controlled manner; ITER is not intended to generate electricity.

The electrical substation hints at the vast amount of energy that will be expended in operating the ITER project—and indeed every large fusion facility. As pointed out in my previous Bulletin story, fusion reactors and experimental facilities must accommodate two classes of electric power drain: First, a host of essential auxiliary systems such as cryostats, vacuum pumps, and building heating, ventilation and cooling must be maintained continuously, even when the fusion plasma is dormant. In the case of ITER, that non-interruptible power drain varies between 75 and 110 MW(e), wrote J.C. Gascon and his co-authors in their January 2012 article for Fusion Science & Technology, “Design and Key Features for the ITER Electrical Power Distribution.”

The second category of power drain is tied directly to the plasma itself, which operates in pulses. For ITER, at least 300 MW(e) will be required for tens of seconds to heat the reacting plasma and establish the requisite plasma currents. During the 400-second operating phase, about 200 MW(e) will be needed to maintain the fusion burn and control the plasma’s stability.

Even during the next eight years of plant construction and shakedown, the on-site power consumption will average at least 30 MW(e), adding to the invested energy and serving as a forerunner of the non-interruptible site power drain.
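
To put these drains in energy terms, multiply each power level by how long it is drawn. The Python sketch below uses the figures quoted above; the 30-second ramp-up duration is a hypothetical stand-in for the text's "tens of seconds," and the per-pulse figure excludes the non-interruptible auxiliary load, which is tallied separately per day.

```python
HOURS_PER_YEAR = 8760.0
SECONDS_PER_HOUR = 3600.0


def energy_mwh(power_mw: float, hours: float) -> float:
    """Electric energy in MWh drawn at a constant power for a given time."""
    return power_mw * hours


# Construction and shakedown: at least 30 MW(e) on average for about eight years.
shakedown_mwh = energy_mwh(30.0, 8 * HOURS_PER_YEAR)

# One plasma pulse: a ramp-up at >= 300 MW(e), then a 400 s burn at about 200 MW(e).
RAMP_UP_S = 30.0  # hypothetical value; the article says only "tens of seconds"
ramp_mwh = energy_mwh(300.0, RAMP_UP_S / SECONDS_PER_HOUR)
burn_mwh = energy_mwh(200.0, 400.0 / SECONDS_PER_HOUR)
pulse_mwh = ramp_mwh + burn_mwh

# Non-interruptible auxiliary load (75 to 110 MW(e)) over one day, for comparison.
aux_day_mwh = (energy_mwh(75.0, 24.0), energy_mwh(110.0, 24.0))

print(f"shakedown: {shakedown_mwh / 1e6:.2f} TWh")  # 2.10 TWh
print(f"one pulse: {pulse_mwh:.1f} MWh")            # 24.7 MWh
print(f"auxiliary load per day: {aux_day_mwh[0]:.0f}-{aux_day_mwh[1]:.0f} MWh")
```

Even under these rough assumptions, a single day of the auxiliary load alone exceeds the electricity drawn by dozens of plasma pulses.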

But much of the information about these power drains, and the distinction between the heat ITER is expected to generate and electricity, got lost when the project was described to the public.

Energy enlightenment. Recently, the website New Energy Times presented a well-documented account, “The ITER power amplification myth,” about how the facility’s communications department disseminated poorly worded information about the ITER power balance and misled the news media. A typical widespread statement is that “ITER will produce 500 megawatts of output power with an input power of 50 megawatts,” implying that both numbers refer to electric power.

New Energy Times makes it clear that the expected 500 megawatts of output refers to fusion power (embodied in neutrons and alphas)—which has nothing to do with electric power. The input of 50 MW referred to here is the heating power injected into the plasma to help sustain its temperature and current, and it’s only a small fraction of the overall electric input power to the reactor. The latter varies between 300 and 400 MW(e), as explained earlier.
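
The two readings of "500 MW out for 50 MW in" can be separated by computing the ratios explicitly. In the Python sketch below, the plasma gain compares fusion power with the heating power injected into the plasma, while the second ratio compares the same thermal fusion power with the 300 to 400 MW(e) the facility draws from the grid. The helper names are illustrative; the point is only that the ratios differ, and that ITER's electric output is zero in every case.

```python
P_FUSION_MW = 500.0          # thermal fusion power (neutrons plus alphas), not electricity
P_PLASMA_HEATING_MW = 50.0   # heating power injected into the plasma
P_ELECTRIC_OUTPUT_MW = 0.0   # ITER has no equipment to convert heat to electricity
GRID_INPUTS_MW = (300.0, 400.0)


def ratio(numerator_mw: float, denominator_mw: float) -> float:
    return numerator_mw / denominator_mw


# The widely quoted "gain of 10": fusion power over plasma heating power.
q_plasma = ratio(P_FUSION_MW, P_PLASMA_HEATING_MW)

# Thermal fusion power over total electric input from the grid.
fusion_to_grid = {p_in: ratio(P_FUSION_MW, p_in) for p_in in GRID_INPUTS_MW}

# Net electricity: electric output minus electric input.
net_electric_mw = {p_in: P_ELECTRIC_OUTPUT_MW - p_in for p_in in GRID_INPUTS_MW}

print(f"plasma gain Q: {q_plasma:.0f}")  # 10
for p_in in GRID_INPUTS_MW:
    print(f"grid input {p_in:.0f} MW(e): fusion/input = {fusion_to_grid[p_in]:.2f}, "
          f"net electricity = {net_electric_mw[p_in]:.0f} MW(e)")
```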

The New Energy Times technical critique is essentially valid and draws attention to the colossal electrical power demanded by any fusion facility. In fact, it has always been recognized that a huge amount of energy is required to start up any fusion system. But tokamak fusion systems also require an unceasing supply of hundreds of megawatts of electric power just to keep them going. In an apparent response to criticism from New Energy Times, the ITER website and other outlets such as EUROfusion have corrected some misleading statements with regard to power flow.

Yet there are far more serious issues with ITER’s advertised operation than the misleading labeling of projected input and output powers. While the input electric power of 300 MW(e) and more is indisputable, a fundamental question is whether ITER will produce 500 MW of anything, a question that hinges on the vital tritium fuel: its supply, the willingness to use it, and the campaign needed to optimize its performance. Other misconceptions involve the actual nature of the fusion products.

Tritium tribulations. The most reactive fusion fuel is a 50/50 mixture of the hydrogen isotopes deuterium and tritium; this fuel (often written as “D-T”) has a fusion neutron output 100 times that of deuterium alone, with a correspondingly spectacular increase in radiation consequences.

Deuterium is abundant in ordinary water, but there is no natural supply of tritium, a radioactive nuclide with a half-life of only 12.3 years. The ITER website states that the tritium fuel will be “taken from the global tritium inventory.” That inventory consists of tritium extracted from the heavy water of CANDU nuclear reactors, located mainly in Ontario, Canada, and secondarily in South Korea, with a potential future source from Romania. Today’s “global inventory” is approximately 25 kilograms, and increases by only about one-half kilogram per year, note Muyi Ni and his co-authors in their 2013 journal article, “Tritium Supply Assessment for ITER,” in Fusion Engineering and Design. The inventory is expected to peak before 2030.
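
The 12.3-year half-life also means a stockpile shrinks on its own. The short Python sketch below, using only the half-life and the 25-kilogram inventory quoted above, estimates how much of such a stock decays away each year. It illustrates exponential decay only; it is not a model of the actual inventory, which also depends on CANDU production and on withdrawals.

```python
HALF_LIFE_YEARS = 12.3  # tritium half-life


def remaining_kg(initial_kg: float, years: float) -> float:
    """Tritium left after `years` of decay, with no additions or withdrawals."""
    return initial_kg * 0.5 ** (years / HALF_LIFE_YEARS)


def decayed_in_one_year_kg(initial_kg: float) -> float:
    """Tritium lost to radioactive decay during one year."""
    return initial_kg - remaining_kg(initial_kg, 1.0)


INVENTORY_KG = 25.0
print(f"lost per year: {decayed_in_one_year_kg(INVENTORY_KG):.2f} kg")    # 1.37 kg
print(f"left after 10 years: {remaining_kg(INVENTORY_KG, 10.0):.1f} kg")  # 14.2 kg
```

About 5.5 percent of any stored tritium disappears each year, roughly 1.4 kilograms a year from a 25-kilogram stock.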

While fusioneers blithely talk about fusing deuterium and tritium, they are in fact intensely afraid of using tritium for two reasons: First, it is somewhat radioactive, so there are safety concerns connected with its potential release to the environment. Second, there is unavoidable production of radioactive materials as D-T fusion neutrons bombard the reactor vessel, requiring enhanced shielding that greatly impedes access for maintenance and introducing radioactive waste disposal issues.

In 65 years of research involving hundreds of facilities, only two magnetic confinement systems have ever used tritium: the Tokamak Fusion Test Reactor at my old stomping grounds at the Princeton Plasma Physics Lab, and the Joint European Torus (JET) at Culham, UK, way back in the 1990s.

ITER’s present plans call for the acquisition and consumption of at least 1 kilogram of tritium annually. Assuming that the ITER project is able to acquire an adequate supply of tritium and is brave enough to use it, will 500 MW of fusion power actually be achieved? Nobody knows.

“First plasma” at ITER is supposed to occur in 2025. That will be followed by a relatively subdued 10 years of continued machine assembly and periodic plasma operations with hydrogen and helium. These gases produce no fusion neutrons, and thereby permit the resolution of shakedown problems and optimization of plasma performance with minimal radiation hazards. Plasma instabilities must be kept at bay to ensure adequate energy confinement, so the reacting plasma can be heated and maintained at high temperature. Influxes of non-hydrogenic atoms must be curtailed.

ITER’s schedule calls for deuterium and tritium use beginning in the late 2030s. But there’s no guarantee of hitting the 500 MW target; generating fusion power in large quantities depends, among other things, on developing the optimal recipe of deuterium and tritium injection by frozen pellets, particle beams, gas puffing, and recycling. During the unavoidable teething stage through the early 2040s, it’s likely that ITER’s fusion power will be only a fraction of 500 MW, and that more injected tritium will be lost by non-recovery than burned (i.e., fused with deuterium).

Analyses of D-T operation in ITER indicate that only 2 percent of the injected tritium will be burned, so 98 percent of the injected tritium will exit the reacting plasma unscathed. While a high proportion simply flows out with the plasma exhaust, much tritium must be continually scavenged from the surfaces of the reaction vessel, beam injectors, pumping ducts, and other appendages for processing and re-use. During their several dozen traverses of the Tritium Trail of Tears around the plasma, vacuum, reprocessing and fueling systems, some tritium atoms will be permanently trapped in the vessel wall and in-vessel components, and in plasma diagnostic and heating systems.
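
The 2 percent figure can be turned into masses with a back-of-the-envelope calculation. The Python sketch below assumes 500 MW of fusion power sustained for one 400-second pulse, about 17.6 MeV released per D-T reaction, and one tritium nucleus consumed per reaction; the burn fraction is the 2 percent quoted above. Real pulses ramp up and down, so treat the output as an order of magnitude.

```python
MEV_TO_J = 1.602176634e-13           # joules per MeV
ATOMIC_MASS_UNIT_KG = 1.66053906660e-27
TRITIUM_MASS_U = 3.01604928          # mass of a tritium atom in atomic mass units
E_PER_REACTION_MEV = 17.6            # energy released per D-T fusion reaction


def tritium_burned_kg(fusion_power_w: float, seconds: float) -> float:
    """Mass of tritium fused at constant power; one tritium nucleus per reaction."""
    reactions_per_s = fusion_power_w / (E_PER_REACTION_MEV * MEV_TO_J)
    return reactions_per_s * seconds * TRITIUM_MASS_U * ATOMIC_MASS_UNIT_KG


BURN_FRACTION = 0.02  # share of injected tritium that actually fuses

burned_g = tritium_burned_kg(500e6, 400.0) * 1000.0
injected_g = burned_g / BURN_FRACTION
unburned_g = injected_g - burned_g

print(f"burned per pulse:   {burned_g:.3f} g")    # 0.355 g
print(f"injected per pulse: {injected_g:.1f} g")  # 17.8 g
print(f"must be recovered:  {unburned_g:.1f} g")  # 17.4 g
```

On these assumptions, every gram of tritium that fuses must be accompanied by roughly 49 grams that pass through the plasma unburned and must be scavenged and reprocessed.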

The permeation of tritium at high temperature in many materials is not understood to this day, as R. A. Causey and his co-authors explained in “Tritium barriers and tritium diffusion in fusion reactors.” The deeper migration of some small fraction of the trapped tritium into the walls and then into liquid and gaseous coolant channels will be unpreventable. Most implanted tritium will eventually decay, but there will be inevitable releases into the environment via circulating cooling water.

Designers of future tokamak reactors commonly assume that all the burned tritium will be replaced by absorbing the fusion neutrons in lithium completely surrounding the reacting plasma. But even that fantasy totally ignores the tritium that’s permanently lost in its globetrotting through reactor subsystems. As ITER will demonstrate, the aggregate of unrecovered tritium may rival the amount burned and can be replaced only by the costly purchase of tritium produced in fission reactors.

Radiation and radioactive waste from fusion. As noted earlier, ITER’s anticipated 500 MW of thermal fusion power is not electric power. But what fusion proponents are loath to tell you is that this fusion power is not some benign solar-like radiation but consists primarily (80 percent) of streams of energetic neutrons whose only apparent function in ITER is to produce huge volumes of radioactive waste as they bombard the walls of the reactor vessel and its associated components.

Just 2 percent of the neutrons will be intercepted by test modules for investigating tritium production in lithium, but 98 percent of the neutron streams will simply smash into the reactor walls or into devices in port openings.

In fission reactors, at most 3 percent of the fission energy appears as neutrons. But ITER is akin to an electrical appliance that converts hundreds of megawatts of electric power into neutron streams. A peculiar feature of D-T fusion reactors is that the overwhelming preponderance of thermal energy is not produced in the reacting plasma, but rather inside the thick steel reactor vessel as the neutron streams smash into it and gradually dissipate their energy. In principle, this thermalized neutron energy could somehow be converted back to electricity at very low efficiency, but the ITER project has opted to avoid addressing this challenge. That is a task deferred to delusions called demonstration reactors that fusion proponents hope to deploy in the second half of the century.
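
The contrast between fusion and fission follows from where the reaction energy goes. The Python sketch below uses approximate textbook values: about 14.1 of 17.6 MeV leaves a D-T reaction as neutron kinetic energy, whereas a uranium fission releases about 200 MeV, of which only roughly 5 MeV is carried by its two to three neutrons. The 2 percent test-module share is the figure quoted above.

```python
def neutron_share(neutron_energy_mev: float, total_energy_mev: float) -> float:
    """Fraction of a reaction's energy carried away by neutrons."""
    return neutron_energy_mev / total_energy_mev


FUSION_NEUTRON_SHARE = neutron_share(14.1, 17.6)   # D-T fusion
FISSION_NEUTRON_SHARE = neutron_share(5.0, 200.0)  # U-235 fission, approximate

P_FUSION_MW = 500.0
TEST_MODULE_SHARE = 0.02  # neutrons intercepted by tritium-breeding test modules

neutron_power_mw = FUSION_NEUTRON_SHARE * P_FUSION_MW
test_module_mw = TEST_MODULE_SHARE * neutron_power_mw
wall_mw = neutron_power_mw - test_module_mw

print(f"fusion neutron share:  {FUSION_NEUTRON_SHARE:.0%}")   # 80%
print(f"fission neutron share: {FISSION_NEUTRON_SHARE:.1%}")  # 2.5%
print(f"neutron power: {neutron_power_mw:.0f} MW, to test modules: {test_module_mw:.0f} MW, "
      f"into walls and port devices: {wall_mw:.0f} MW")
```

At full power, roughly 400 MW would stream out as neutrons, of which only about 8 MW would reach the test modules.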

A long-recognized drawback of fusion energy is neutron radiation damage to exposed materials, causing swelling, embrittlement and fatigue. As it happens, the total operating time at high neutron production rates in ITER will be too small to cause even minor damage to structural integrity, but neutron interactions will still create dangerous radioactivity in all exposed reactor components, eventually producing a staggering 30,000 tons of radioactive waste.

Surrounding the ITER tokamak, a monstrous concrete cylinder called the bioshield, 3.5 meters thick, 30 meters in diameter, and 30 meters tall, will prevent X-rays, gamma rays, and stray neutrons from reaching the outside world. The reactor vessel and non-structural components, both inside the vessel and beyond it up to the bioshield, will become highly radioactive through activation by the neutron streams. Downtimes for maintenance and repair will be prolonged because all maintenance must be performed by remote handling equipment.

For the much smaller Joint European Torus experimental project in the United Kingdom, the radioactive waste volume is estimated at 3,000 cubic meters, and the decommissioning cost will exceed $300 million, according to the Financial Times. Those numbers will be dwarfed by ITER’s 30,000 tons of radioactive wastes. Fortunately, most of this induced radioactivity will decay in decades, but after 100 years some 6,000 tons will still be dangerously radioactive and require disposal in a repository, says the “Waste and Decommissioning” section of ITER’s Final Design Report.

Periodic transport and off-site disposal of radioactive components as well as the eventual decommissioning of the entire reactor facility are energy-intensive tasks that further expand the negative side of the energy accounting ledger.

Water world. Torrential water flows will be needed to remove heat from ITER’s reactor vessel, plasma heating systems, tokamak electrical systems, cryogenic refrigerators, and magnet power supplies. Including fusion generation, the total heat load could be as high as 1,000 MW, but even with zero fusion power the reactor facility consumes up to 500 MW(e) that eventually becomes heat to be removed. ITER will demonstrate that fusion reactors would be much greater consumers of water than any other type of power generator, because of the huge parasitic power drains that turn into additional heat that needs to be dissipated on site. (By “parasitic,” we mean consuming a chunk of the very power that the reactor produces.)

Cooling water will be taken from the Canal de Provence, formed by channeling the Durance River, and most heat will be discharged into the atmosphere by cooling towers. During fusion operations, the combined flow rate of all the cooling water will be as large as 12 cubic meters per second (about 190,000 gallons per minute), or more than one-third the flow rate of the Canal. That level of water flow can sustain a city of 1 million residents. (But the actual demand on the Canal’s water will be only a very small fraction of that value because ITER’s power pulse will be just 400 seconds long with at most 20 such pulses daily, and ITER’s cooling water is recirculated.)
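
The flow figures and the pulse schedule can be checked with a few unit conversions. The Python sketch below converts the peak flow to US gallons per minute and estimates what share of each day the peak flow would actually be needed, using the 400-second pulse length and the 20-pulses-per-day maximum quoted above. It ignores recirculation, so the daily volume describes water passing through the cooling loops during pulses, not water withdrawn from the Canal.

```python
LITERS_PER_US_GALLON = 3.785411784
SECONDS_PER_DAY = 86_400

PEAK_FLOW_M3_PER_S = 12.0
PULSE_LENGTH_S = 400.0
MAX_PULSES_PER_DAY = 20


def m3_per_s_to_gpm(flow_m3_per_s: float) -> float:
    """Convert cubic meters per second to US gallons per minute."""
    return flow_m3_per_s * 1000.0 / LITERS_PER_US_GALLON * 60.0


peak_gpm = m3_per_s_to_gpm(PEAK_FLOW_M3_PER_S)
duty_cycle = MAX_PULSES_PER_DAY * PULSE_LENGTH_S / SECONDS_PER_DAY
daily_volume_at_peak_m3 = PEAK_FLOW_M3_PER_S * MAX_PULSES_PER_DAY * PULSE_LENGTH_S

print(f"peak flow: {peak_gpm:,.0f} gal/min")                   # about 190,200
print(f"fraction of the day at full pulse: {duty_cycle:.1%}")  # 9.3%
print(f"circulated during pulses: {daily_volume_at_peak_m3:,.0f} m^3/day")  # 96,000
```

Even at the maximum pulse schedule, full cooling flow would be needed for less than a tenth of each day, which is why the net draw on the Canal stays small.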

Even while ITER is producing nothing but neutrons, its maximum coolant flow rate will still be nearly half that of a fully functioning coal-burning or nuclear plant that generates 1,000 MW(e) of electric power. In ITER as much as 56 MW(e) of electric power will be consumed by the pumps that circulate the water through some 36 kilometers of nuclear-grade piping.

Operation of any large fusion facility such as ITER is possible only in a location such as the Cadarache region of France, where there is access to many high-power electric grids as well as a high-throughput cool water system. In past decades, the great abundance of freshwater flows and unlimited cold ocean water made it possible to implement large numbers of gigawatt-level thermoelectric power plants. In view of the decreasing availability of freshwater and even cold ocean water worldwide, the difficulty of supplying coolant water would by itself make the future wide deployment of fusion reactors impractical.

ITER’s impact. Whether ITER performs poorly or well, its most favorable legacy is that, like the International Space Station, it will have set an impressive example of decades-long international cooperation among nations both friendly and semi-hostile. Critics charge that international collaboration has greatly amplified the cost and timescale, but the $20-to-30 billion cost of ITER is not out of line with the costs of other large nuclear enterprises, such as the power plants that have been approved in recent years for construction in the United States (Summer and Vogtle) and Western Europe (Hinkley and Flamanville), and the US MOX nuclear fuel project at Savannah River. All these projects have experienced a tripling of costs and construction timescales that ballooned from years to decades. The underlying problem is that all nuclear energy facilities, whether fission or fusion, are extraordinarily complex and exorbitantly expensive.

A second invaluable role of ITER will be its definitive influence on energy-supply planning. If successful, ITER may allow physicists to study long-lived, high-temperature fusing plasmas. But viewed as a prototypical energy producer, ITER will be, manifestly, a havoc-wreaking neutron source fueled by tritium produced in fission reactors, powered by hundreds of megawatts of electricity from the regional electric grid, and demanding unprecedented cooling water resources. In any subsequent fusion reactor that attempts to generate enough electricity to exceed all the energy sinks identified herein, neutron damage will be intensified and the other characteristics will endure.

When confronted by this reality, even the most starry-eyed energy planners may abandon fusion. Rather than heralding the dawn of a new energy era, it’s likely instead that ITER will perform a role analogous to that of the fission fast breeder reactor, whose blatant drawbacks mortally wounded another professed source of “limitless energy” and enabled the continued dominance of light-water reactors in the nuclear arena.

Daniel Jassby, the author of this article, may be contacted by Email at “[email protected]”

It would appear that by your implied sarcasm, you do not approve of the experimental nature of the ITER Project and its objectives. I find this surprising from an individual whose career invested a considerable amount of time devoted to research on nuclear fusion experiments at the Princeton Plasma Physics Lab, and whose research interests lay in the areas of plasma physics and neutron production related to fusion-energy research and development. Without decades of R&D, many of today’s technologies would not exist.

Consider that it took 3 years to go from the first hint of uranium fission to a working reactor, Enrico Fermi’s Chicago Pile. And only 12 years after WW-II to the first operating commercial nuclear power plant at Shippingport. Compare that to fusion, and total discouragement is the only sane response. Nuclear fission is the only economic answer for the foreseeable future. Unfortunately, solid fuel pressurized water reactors (SFPWRs) have proven to be a terrible design. But Molten Salt Reactors (MSRs), designed 60 years ago by the same guy who designed the original SFPWR, Alvin Weinberg, solve all the…

Dude, the point is the Emperor has no clothes. Reread the article. That someone who WAS so involved in hot fusion has reached this conclusion is about as real as it gets.

And yet you would disregard thousands of other individuals, who also work in the field and hold the opposite opinion, for the opinion of one.

jobs are jobs!!…people need jobs…….they will learn a lot of really hard lessons and it will cost a lot and pollute a bunch, but they will finally learn that it is only a cool research project…it is just not economically viable for electricity production……advances in fission will easily outrun fusion capabilities…. and be safer…this guy knows the endgame and is trying to educate.

Dan, it’s great to hear that you are just as cynical and prescient as when we worked together on fusion reactor designs 40 years ago. Looking back, one does see more clearly. Back in the day we were skeptical that pure fusion would ever be economically viable, so we thought up ways (hybrids) to boost the output by 10-20 fold. But over the years it’s become clear that the supposition that pure fusion would even work is suspect. I do, however, still believe in fission, which continues to improve, albeit slowly.

To address reader requests, the Bulletin has assembled in one place a “greatest hits” collection of some of our articles on fusion energy, which date back decades. You can find it at https://thebulletin.org/2019/04/fusions-greatest-hits-as-detailed-in-the-bulletin/

I reckon humanity took a wrong turn chasing after the holy grail of nuclear fusion. How much better we would be if a fraction of the vast sums poured into it over the last 60 years had gone into battery research instead. Right now, to combat climate change, we desperately need something better than lithium-ion batteries in order to power cars, planes and ships, to complement domestic solar systems and to even out the fluctuations in wind and solar power stations. And we still haven’t got anything better than old technology, Li-ion batteries in commercial production. Do we even need…

Since the magnetic field of the sun’s corona has been increasing, explaining warming here, we could use more understanding of these hot plasmas. Our equations are incomplete as usual.

If this technology ever comes to fruition, only the very richest countries will be able to afford it. It will be clean energy for them only insofar as the radioactive waste can be disposed of in poor countries.

The EU loves big, expensive, and sensationalist projects, all without one ounce of practical reasoning. There is no difference between CERN and its massive waste of funds on what was already known before the first shovel hit the ground on the LHC, and this ITER monstrosity. The only reason ITER has attention is due to the overblown public scare of current nuclear power. All while China today has 11 reactors under active construction, they are building their future while the west is shaking in their boots. The EU could have built MULTIPLE nuclear plants with the funds wasted on ITER let…

You spent 3 paragraphs lamenting the use of fossil fuels in constructing this facility yet offered no alternatives. I’m not understanding the point; all massive projects require massive energy inputs. Are you suggesting they all be supplied by renewables? Or that they could be renewables? Or we just scrap the project altogether? Then you lament that the experimental facility will not generate electricity, which, sure, is a bummer, but I was under the impression that the whole point of prototypes / proof of concept was that they might not be used for commercial use / production? The world…

The project is now employing its third generation of plasma physicists. It is for projects like this that they chose their careers.

The other part of the answer requires a reflection on the 70 years during which three generations of scientists have been working on this goal. They have been nearly exclusively focusing on the challenge from the perspective of physics, and basically ignoring all matters of engineering. Expressing dissent, as Daniel has done here, has won him very few friends. But he’s not the only one who has had the clarity and the courage to speak against the tide. The first to do this was Robert L. Hirsch, who headed the US federal fusion program from 1972-1976. Look at what he…

I greatly appreciated this critical analysis that covered many issues that are typically never addressed by hyped press releases and popular articles that are intended to feed our desires for hope.

When many people fall in love with a person, or a technology, they are often blinded to flaws that might be present in those subjects. It is natural for people to gravitate to the information sources that reinforce their deeply entrenched beliefs while spurning information that isn’t supportive. This article exposed many of the issues that lovers of fusion power tend to be blind to.

I am a product of a literal “nuclear family” in Livermore, headed by a physicist. Though not pursuing science myself, I am sure that my father (as well as any REAL scientists who would be primarily inclined to deductively reason through the facts) would indeed be highly skeptical of fusion as an electrical energy savior. It was clear decades ago that the same sort of monumental obstacles were likely to lead to insurmountable engineering challenges that are so well articulated in this article. So now, the self-interested are willing to put profit ahead of the truth. I see it here…

Doug, well said. May I add that the situation is actually worse. While pitching the idea of ITER, the promoters of ITER lied (a lie of omission) about the input power requirement, thus lying about the expected net output.

http://news.newenergytimes.net/2020/11/14/omitting-the-iter-input-power-bigot-and-coblentzs-roles/

We already have a vast nuclear reactor available to us which will last billions of years and is free. We know how to use it to make electricity and the required components are getting better and cheaper. The notion that we need to build our own fusion reactor is about as silly as saying we need to manufacture air. How many roofs could have installed panels and how much could have been spent on improving battery technology if we stopped wasting tens of billions on what we don’t need at all? Why do we keep building hydro electric facilities and…

All one need do is obtain a copy of the Princeton study on Tokamak reactors (published in the late ’60s or early 70s) to understand why it is unlikely fusion will ever be a practical means of electrical power generation. And so far, I have not seen mention anywhere in the current literature that a fusion-based economy would require multiples of the known world reserves of several critical metals, including cobalt. But then again, so would an economy based on windmills, solar panels, and batteries…..so who cares about trivialities such as resource scarcity?

I’d love to hear your views on other tokamak and fusion projects. The article to me seems to be more of a criticism of ITER rather than fusion itself, not surprising given its vast scale. JET and MAST are far smaller scale, but no one ever seems to talk about the potential of private companies such as Tokamak Energy, with ST40.

Apart from all the points Dan makes, there is the following: ITER, somewhere on their website, refer to their experiment as the most complex machine ever built (or some such description). I daresay this may be true. Now put yourself in the shoes of a power generating utility: Who, in God’s name, would want to generate electric power for profit using the “most complex machine ever built”? For heaven’s sake, utilities can barely cope with comparatively simple fission plants. And given all the items missing from ITER, such as self-sustaining tritium breeding, conversion of fusion products into electric power, ability…